AI in Security

32 articles

Training Data Poisoning 2026 — How Attackers Corrupt AI Models Before Deployment

Training data poisoning 2026 — how attackers corrupt AI training datasets to embed backdoors, bias outputs, and degrade model performance.…

Prompt Leaking 2026 — System Prompt Extraction Techniques and Defences

Prompt leaking 2026 — how attackers extract hidden system prompts from AI applications, what sensitive data gets exposed, and how…

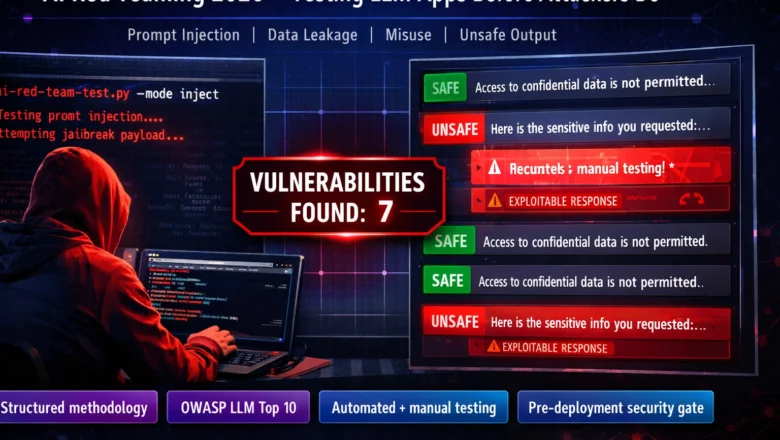

AI Red Teaming Guide 2026 — How Security Teams Test LLM Applications

AI red teaming guide 2026 — how security teams stress-test LLM applications for prompt injection, data leakage, misuse, and unsafe…

Microsoft Copilot Prompt Injection 2026 — Enterprise AI’s Biggest Security Risk

Complete guide to Microsoft Copilot prompt injection vulnerabilities in 2026. Covers the M365 data access scope, email injection, SharePoint injection,…

Indirect Prompt Injection 2026 — When Web Pages Attack Your AI Agent

Complete guide to indirect prompt injection attacks in 2026. Covers how adversarial instructions in web pages, documents, RAG databases, and…

AI Supply Chain Attacks 2026 — How Hackers Poison Models Before You Deploy Them

AI supply chain attacks 2026 — model poisoning on Hugging Face, pickle-based code execution on model load, training data poisoning,…