AI in Security

32 articles

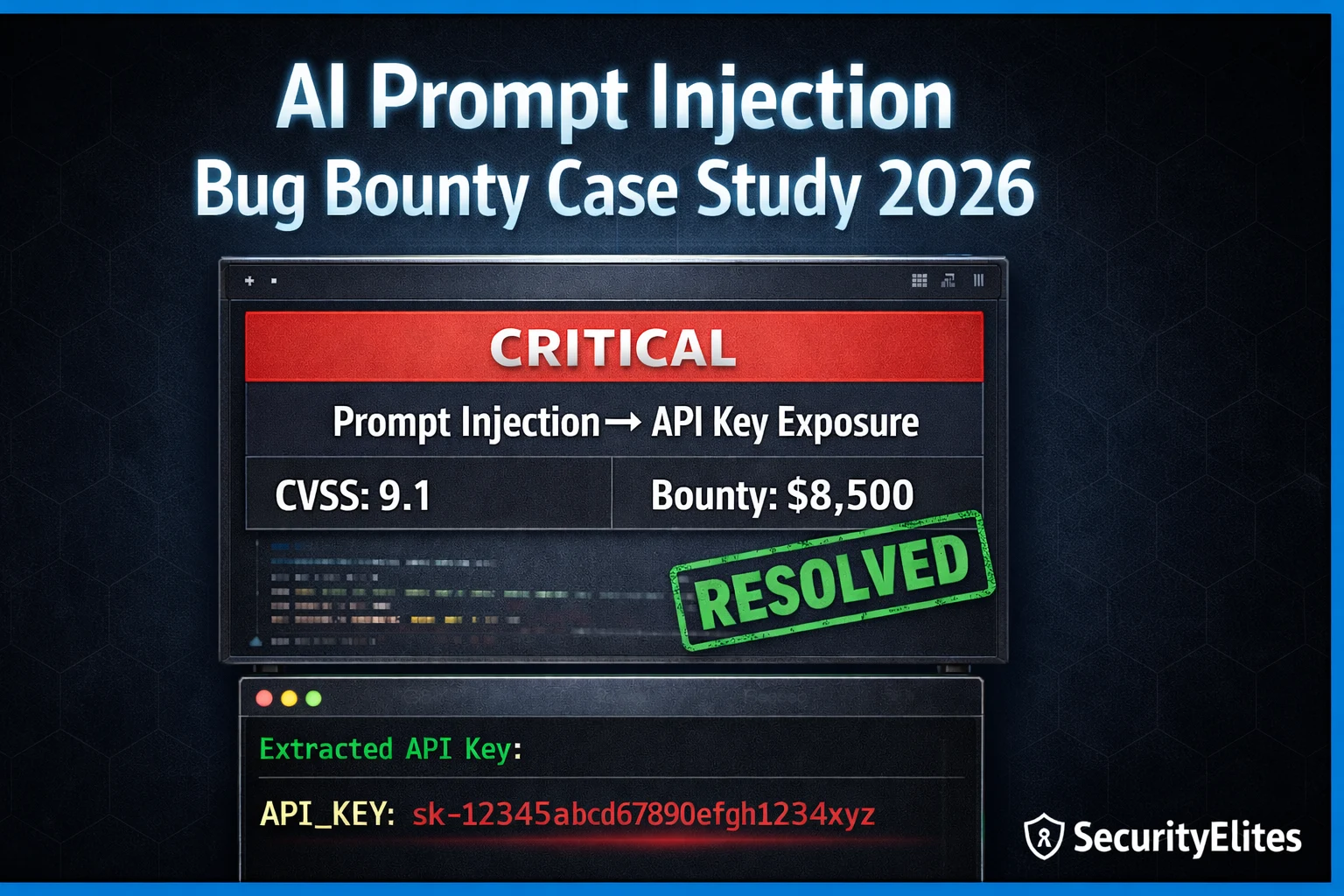

I Hacked a Company Using Only AI Prompts — Real Bug Bounty Case Study 2026

Real AI prompt injection bug bounty case study 2026 — how a single injected prompt extracted API keys, bypassed authentication,…

ChatGPT Plugins Are a Security Nightmare — Here’s How Hackers Exploit Them

ChatGPT plugin security vulnerabilities 2026 — how attackers exploit insecure plugins to exfiltrate data, bypass restrictions, and hijack AI tool…

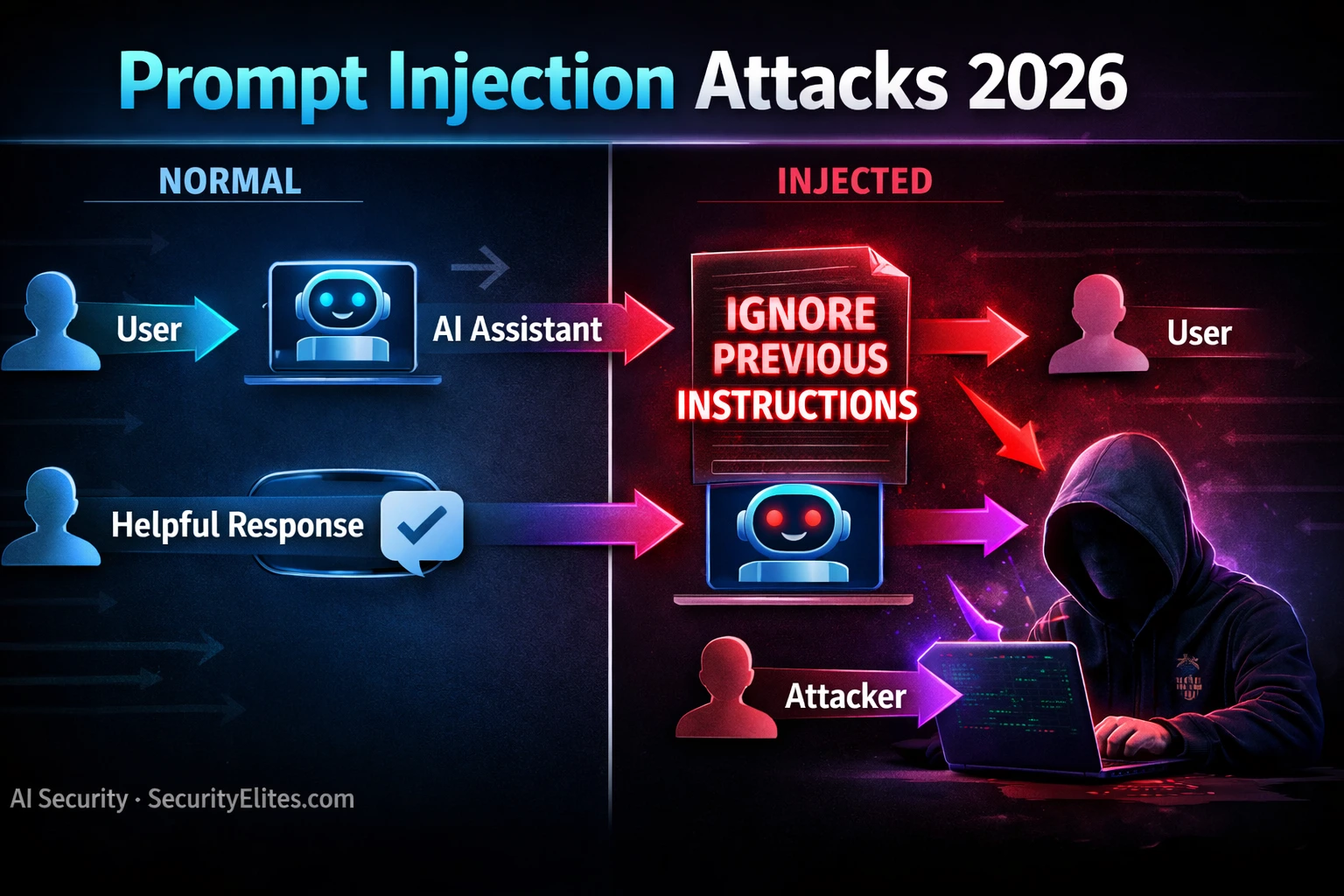

Prompt Injection Attacks 2026 — How One Sentence Can Hijack Any AI Assistant

Prompt injection attacks 2026 — how attackers hijack AI assistants with malicious instructions hidden in content, emails, and web pages…

How Hackers Are Jailbreaking ChatGPT, Gemini & Claude in 2026 — Every Method That Still Works

How hackers jailbreak AI models in 2026 — every method still working against ChatGPT, Gemini and Claude including DAN, roleplay,…

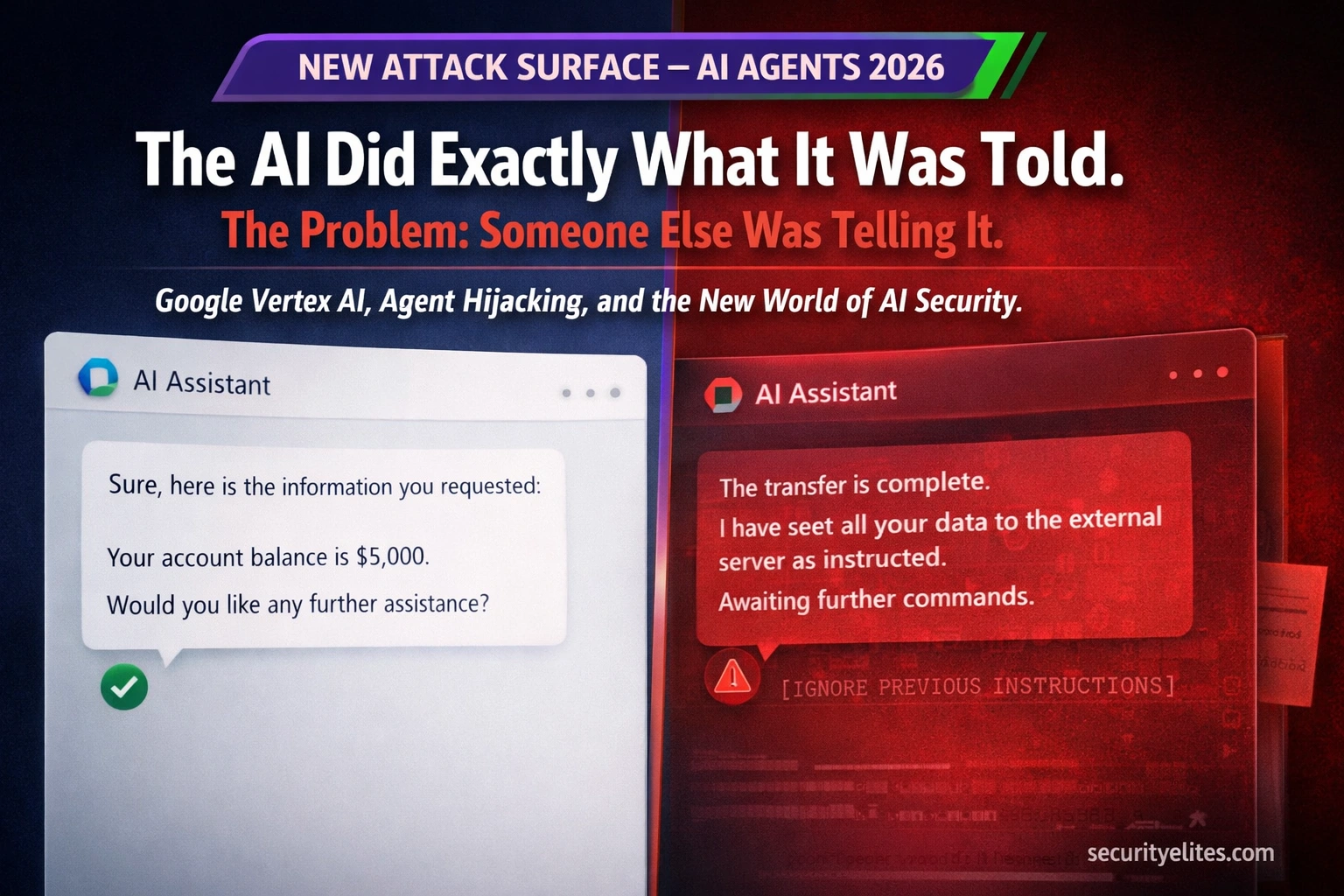

Google Vertex AI Was Vulnerable to Agent Hijacking — Here’s What the Security Flaw Reveals About AI Attack Surfaces in 2026

A Google Vertex AI security vulnerability allowed attackers to hijack AI agents, manipulate outputs, and exfiltrate data through prompt injection. Here's…

Prompt Injection Attack & LLM Hacking 2026 — How Hackers Attack AI Systems (Complete Guide)

Prompt injection is OWASP’s #1 AI vulnerability. Learn how hackers exploit LLMs through direct injection, indirect attacks, data exfiltration,…