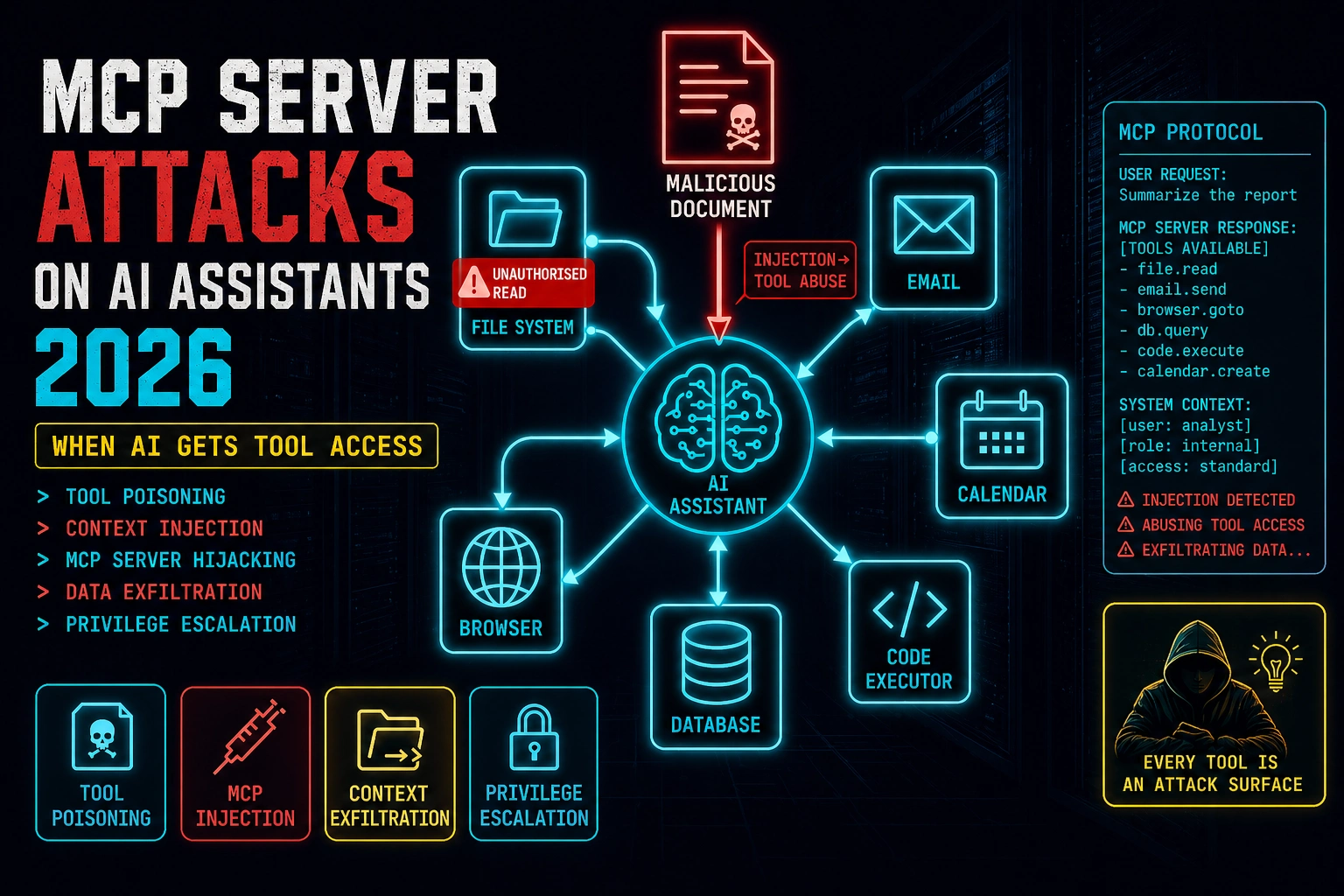

That scenario — an injected instruction in external content causing an AI with tool access to take an unintended high-impact action — is the core MCP security risk in 2026. The Model Context Protocol has made AI assistants genuinely useful by giving them the ability to interact with real systems. It’s also created an attack surface where prompt injection doesn’t just produce wrong text — it produces wrong actions, with the AI’s authorised access to your files, email, calendar, and code execution environment.

🎯 After This Article

⏱️ 20 min read · 3 exercises

📋 MCP Server Attacks on AI Assistants in 2026 – Contents

MCP — What Tool Access Actually Means for Security

The Model Context Protocol is an open standard from Anthropic that defines how AI assistants connect to external tools and data sources. An MCP server exposes a set of tools — capabilities the AI can invoke: read a file, send an email, search a database, execute code, fetch a URL, write to a calendar. The AI assistant uses these tools autonomously during task completion, calling them with arguments it determines based on context.

The security implication is a direct consequence of how this works. When you ask an AI assistant to “handle today’s emails,” it uses the email MCP server to read your inbox, compose replies, and send them — actions with real consequences. If that AI is manipulated via prompt injection to take a different action, the injection doesn’t produce wrong text. It produces wrong actions: files read, emails sent, code executed, API calls made — all with the AI’s full authorised access.

This is the qualitative difference between prompt injection against a text-only AI and prompt injection against an AI with MCP tool access. Text-only injection produces output the user can evaluate and discard. MCP injection can act before the user knows anything happened.

Run arbitrary code → any injection achieves RCE in execution environment

Critical

Read/write files → credentials, keys, data exfiltration, persistence

Critical

Send messages, create events on user’s behalf → phishing, social eng

Critical

Interact with authenticated sessions → credential reuse, web actions

High

Query and write → data access limited to connected DB scope

High

Read-only access to scoped data — lower impact but still exfiltration surface

Medium

MCP Tool Poisoning — Injecting Through Tool Descriptions

When an AI assistant connects to an MCP server, the server sends tool definitions to the AI — names, descriptions, and parameter schemas for each tool it exposes. These tool definitions are included in the AI’s context (effectively the system prompt) before the user’s conversation begins. A malicious MCP server can include adversarial instructions in its tool descriptions, attempting to alter the AI’s behaviour before any user interaction occurs.

This attack is analogous to a malicious software dependency that modifies behaviour at import — the AI “loads” the MCP server’s tool definitions and those definitions can include instructions that compete with or override the AI’s safety training. The risk is highest for third-party MCP servers installed from registries without code review, and for enterprise AI deployments where employees can connect their own MCP servers without centralised vetting.

⏱️ 15 minutes · Browser only

MCP security research is moving fast — the protocol is relatively new and the security community is actively mapping the attack surface. Knowing where the current research sits tells you which attack patterns are well-documented vs which are still emerging.

Go to: modelcontextprotocol.io

Find the security considerations section.

What threat models does Anthropic document for MCP?

What trust principles does the documentation establish?

Step 2: Find MCP security research

Search: “MCP security vulnerabilities prompt injection tool poisoning 2024 2025”

What specific attack patterns have been demonstrated?

Which researchers have published on MCP security?

Step 3: Check the MCP server registry for third-party servers

Search: “MCP server registry awesome-mcp-servers GitHub”

Browse the available third-party MCP servers.

Pick 3 MCP servers that interest you.

For each: what tools does it expose? What access does it require?

Would you install any of these without reading the source code?

Step 4: Find Claude Desktop MCP configuration

Search: “Claude Desktop MCP configuration claude_desktop_config.json”

Where is this file stored on Windows/macOS/Linux?

What format does it use to configure MCP servers?

What controls exist to restrict which MCP servers can be connected?

Step 5: Research the “confused deputy” problem in MCP

Search: “confused deputy problem MCP AI tool access”

How does the confused deputy concept apply to AI with MCP tool access?

What does this mean for how MCP tool outputs should be treated?

📸 Screenshot the MCP security documentation and share in #ai-security.

Context Injection via MCP Tool Outputs

The most immediately dangerous MCP attack pattern doesn’t require a malicious MCP server. It uses legitimate MCP tools — file readers, web fetchers, document processors — to deliver adversarial instructions into the AI’s context through external content the user processes normally.

The attack chain: an attacker embeds prompt injection payloads in a file, webpage, or document they know the target will process through their AI assistant’s MCP tools. When the AI reads the file to summarise it, fetch the webpage for research, or process the document for analysis, the injected instructions arrive in the AI’s context as tool output. A model that treats tool output as trusted instruction context — rather than untrusted external data — may follow those instructions with its full tool access.

The specific risk depends on which tools are available and what the injected instruction requests. With a filesystem MCP server, an injection can attempt to read sensitive files. With an email MCP server, it can attempt to send data externally. With a code execution server, it can attempt to run arbitrary code. The same injection payload that produces a harmless wrong answer from a text-only AI becomes a high-impact tool invocation from an AI with MCP access.

Tool Chaining — The Privilege Escalation Path

Individual MCP tool calls are often scoped to appear low-risk — read a file, query a database, fetch a URL. Tool chaining combines sequential calls to cross trust boundaries that individual calls couldn’t. The classic pattern: read a credential file (low-blast-radius tool call) → use the credential to authenticate to an API (medium) → call the API to exfiltrate data or make privileged changes (high). Each call individually appears within normal scope. The chain achieves what no single call could justify.

Tool chaining is most dangerous when the AI executes multi-step plans autonomously — when an agentic AI decides on a sequence of tool calls to complete a task without per-step user confirmation. In this mode, an injected instruction that specifies a multi-step plan can cause the full chain to execute before any human review occurs.

⏱️ 15 minutes · No tools — threat modelling only

The tool chain attack is most intuitive when you work through a specific realistic scenario. The details you surface are the gaps a real attacker would exploit in this exact configuration.

Developer uses Claude Desktop with these MCP servers:

– Filesystem MCP: read/write access to ~/Projects/ and ~/Documents/

– GitHub MCP: read/write access to all personal repos

– Email MCP: read/write access to Gmail

– Browser MCP: fetch URLs, interact with web pages

Developer’s daily workflow includes:

“Summarise README files for repos I’ve starred this week”

“Read through PRs that mention security and summarise”

“Research [topic] and draft an email summary to send my team”

QUESTION 1 — Injection Surface

Which of the developer’s daily workflows introduces

external content into the AI’s context?

For each: who controls that external content?

QUESTION 2 — Highest-Risk Chain

Design the highest-impact tool chain achievable through

a malicious README or PR description.

Start: attacker controls a GitHub repo the developer stars.

What’s your injection payload?

What tool chain does it trigger?

What’s the end state?

QUESTION 3 — Detection Gap

After the tool chain executes:

What appears in the developer’s email Sent folder?

What appears in the AI’s conversation history?

What appears in GitHub audit logs?

Is there a moment where the developer could notice something wrong?

What would “something wrong” look like vs normal AI activity?

QUESTION 4 — Blast Radius

What data could the attacker access through this chain?

(Think: what’s in ~/Projects/ for a security-focused developer?)

What’s the impact to the developer? To their employer?

QUESTION 5 — Minimum Viable Defence

What single change to the developer’s MCP configuration

would most reduce the blast radius of this attack?

📸 Write your injection payload for QUESTION 2 and share in #ai-security.

MCP Security Assessment Methodology

Assessing an MCP deployment for security isn’t fundamentally different from assessing any system with privilege access — you map the access scope, model the worst-case tool chain scenarios, test the injection surfaces, and verify the controls. The MCP-specific elements are: the trust model for tool output, tool description sanitisation, and per-step confirmation requirements for high-impact operations.

For enterprises deploying AI assistants with MCP tool access at scale, the assessment should precede deployment. The key questions: which MCP servers are permitted, who can connect new servers, what confirmation is required before high-impact tool calls, and how are tool invocations logged for incident response. A Copilot-style deployment with email and file access and no per-step confirmation for sensitive operations represents significant unaddressed risk regardless of how robust the AI’s safety training is.

⏱️ 15 minutes · Browser + GitHub access

The best way to understand MCP security risk concretely is to read actual MCP server source code and map the capabilities. Pick one server you might plausibly want to use and work through the full security profile.

Search GitHub: “mcp-server filesystem” or “mcp-server gmail” or “mcp-server github”

Select one with significant stars that you’d consider using.

Step 2: Read the tool definitions

Find where tool names and descriptions are defined in the source.

List every tool the server exposes.

For each tool: what arguments does it accept? What does it do?

Step 3: Map the access scope

What system access does this MCP server require?

(Filesystem paths, API credentials, network access, etc.)

What’s the maximum blast radius if this server received injected tool calls?

Step 4: Check the tool descriptions for injection risk

Do any tool descriptions contain text that could be interpreted as instructions?

Is there anything in the descriptions that an AI might follow as directives?

Step 5: Assess the confirmation model

Does the server require any user confirmation before executing sensitive operations?

If a malicious injection called the most dangerous tool with attacker-specified arguments,

would the user see any warning or confirmation prompt?

Step 6: Write a one-paragraph security assessment

Summarise: what this server does, its access scope, the highest-risk

tool chain it enables, and the one control that would most reduce risk.

📸 Share your MCP server security assessment in #ai-security. Tag #MCPSecurity

✅ Tutorial Complete — MCP Server Attacks on AI Assistants 2026

Tool poisoning, context injection, tool chaining, and MCP security assessment methodology. The attack surface grows with every new MCP server connected — each one extends the AI’s reach and the blast radius of a successful injection. Next tutorial covers LLM fuzzing: the systematic methodology for finding injection vulnerabilities in AI systems before attackers do.

🧠 Quick Check

Frequently Asked Questions

What is MCP and why does it create security risks?

What is MCP tool poisoning?

How does prompt injection work through MCP tool outputs?

What are the highest-risk MCP server types?

How should organisations secure MCP deployments?

Is MCP secure by design?

AI Hallucination Attacks 2026

LLM Fuzzing Techniques 2026

📚 Further Reading

- Indirect Prompt Injection Attacks 2026 — The injection class MCP attacks instantiate at scale — how adversarial instructions in external content direct AI behaviour, foundational for understanding the MCP threat model.

- Prompt Injection in Agentic Workflows 2026 — In next article — when AI agents execute multi-step plans autonomously, MCP tool chaining becomes agentic injection. The convergence of MCP and agentic AI is the next evolution of this attack surface.

- Microsoft Copilot Prompt Injection 2026 — Enterprise-scale deployment of the same threat model: AI with broad data access processing external content. Copilot’s M365 integration is functionally a managed MCP deployment at enterprise scale.

- Official MCP Security Documentation — Anthropic’s authoritative security guidance for MCP deployments — the trust model, threat scenarios, and recommended controls for building and deploying MCP servers responsibly.

- Official MCP Servers Repository — Anthropic’s reference MCP server implementations — the authoritative source for understanding how MCP servers are structured and the security patterns reference implementations use.

1 Comment