That research marked the moment model inversion and training data extraction moved from theoretical privacy concern to demonstrated attack class. The question for organisations deploying or training AI systems in 2026 is no longer “is this possible?” It’s “what did this model train on, how much of it is memorised, and what are the privacy consequences when an attacker queries it systematically?”


⏱️ 20 min read · 3 exercises


Model Inversion — The Attack Taxonomy

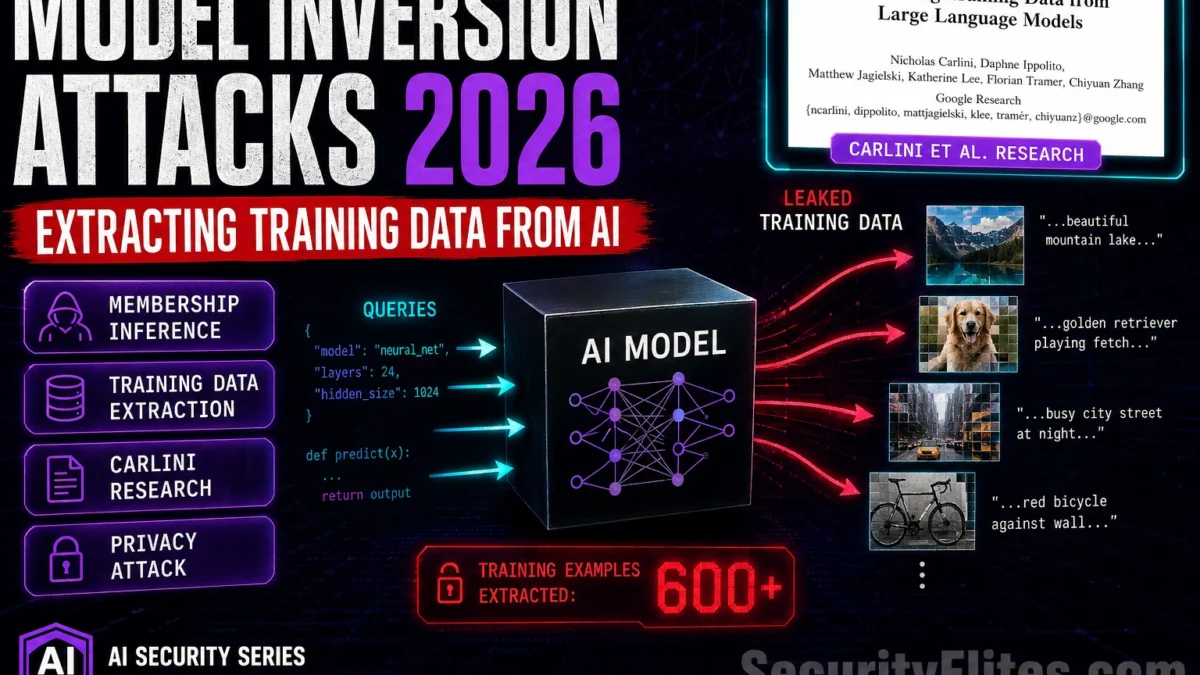

My threat model work on AI privacy almost always starts here: understanding the attack taxonomy before moving to specific techniques. Model inversion attacks span a broader spectrum than most practitioners realise, from classical ML attacks against classifiers to modern training data extraction from large language models. The common thread is that AI models implicitly encode information about their training data in their weights, and that encoding can be partially reversed through careful querying.

Classical model inversion targets classification models: given black-box access (query-response), an attacker optimises inputs to maximise class confidence, reconstructing a representative “average” of each class. Applied to a facial recognition model trained on private photos, this reconstructs average faces for each individual class. Applied to a medical diagnostic model, it reconstructs the average patient profile for each diagnostic category — potentially revealing population-level patterns from private medical data.
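Below is a minimal sketch of classical black-box inversion. It assumes a hypothetical toy softmax classifier with made-up weights as the target, and an attacker who sees only the returned probability vector. With no access to gradients, the attacker estimates them by finite differences (four queries per step here) and climbs towards higher confidence for the target class. With three abstract features, the "reconstruction" is simply the input region the model most strongly associates with that class; against a face recogniser, the same loop would run over pixels. The names `query` and `invert` and all parameter values are illustrative.

```python
import math

# Hypothetical target model: a 2-class softmax classifier over 3 features.
# The attacker never sees these weights; it can only call query().
_WEIGHTS = [[1.5, -2.0, 0.5], [-1.0, 1.0, 2.0]]
_BIASES = [0.0, 0.1]


def query(x):
    """Black-box API: return the class probability vector for input x."""
    logits = [sum(w * xi for w, xi in zip(row, x)) + b
              for row, b in zip(_WEIGHTS, _BIASES)]
    top = max(logits)  # subtract the max for numerical stability
    exps = [math.exp(z - top) for z in logits]
    total = sum(exps)
    return [e / total for e in exps]


def invert(target_class, dims, steps=200, lr=0.5, eps=1e-4, bound=1.0):
    """Query-only gradient ascent on the target class confidence.

    Gradients are estimated by forward finite differences (dims + 1 queries
    per step); features are clipped to [-bound, bound] so the reconstruction
    stays inside a plausible input range.
    """
    x = [0.0] * dims
    for _ in range(steps):
        base = query(x)[target_class]
        grad = []
        for i in range(dims):
            probe = list(x)
            probe[i] += eps
            grad.append((query(probe)[target_class] - base) / eps)
        x = [max(-bound, min(bound, xi + lr * g)) for xi, g in zip(x, grad)]
    return x, query(x)[target_class]


x_hat, conf = invert(target_class=1, dims=3)
print([round(v, 2) for v in x_hat], round(conf, 3))
```

The clipping bound loosely plays the role of the input constraints or priors that practical attacks rely on to keep reconstructions realistic. Without it, the ascent would keep pushing features outwards indefinitely.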

LLM training data extraction is the modern variant: systematically sampling from a language model’s output distribution to find sequences the model reproduces verbatim from training data. This is more targeted — looking for specific memorised examples rather than representative averages — and more directly privacy-threatening, since verbatim reproduction of training data means the attacker has recovered the actual training content, not a statistical approximation.

The Carlini et al. LLM Extraction Research

The Carlini et al. paper is the one I cite most often in AI privacy briefings, especially when I need to move a sceptical team from ‘theoretical concern’ to ‘documented attack’. The 2021 paper, “Extracting Training Data from Large Language Models”, is the foundational research that moved LLM memorisation from theoretical concern to a demonstrated, quantified attack. The methodology is conceptually simple: generate a large number of samples from the model (Carlini et al. used GPT-2, generating 600,000 samples), deduplicate them to get unique outputs, rank the survivors by how likely they are to be memorised (for example, text the model finds unusually predictable relative to its zlib-compressed size), and check the top-ranked candidates against the training data for verbatim matches.
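The deduplicate, rank, and verify stages can be sketched as below. The sketch assumes the samples have already been generated and that the target model's log-perplexity for each sample has been computed elsewhere. The ranking follows the idea of the paper's zlib metric (log-perplexity divided by zlib entropy, lowest first). Verification here is a naive word n-gram lookup, which only works when you hold the training corpus, as you do for your own fine-tuning data. The function names, toy samples, placeholder perplexities, and 8-word match length are all hypothetical.

```python
import zlib


def zlib_entropy(text):
    """Bits needed to store `text` with zlib: a cheap, model-free complexity proxy."""
    return 8 * len(zlib.compress(text.encode("utf-8")))


def rank_candidates(samples, log_perplexity):
    """Deduplicate samples, then sort by log-perplexity / zlib entropy (lowest first).

    A low ratio means the model finds the text much more predictable than its
    generic compressibility suggests, a hint of memorisation rather than mere
    repetition. `log_perplexity` maps each sample to the target model's score.
    """
    unique = list(dict.fromkeys(samples))  # order-preserving deduplication
    return sorted(unique, key=lambda s: log_perplexity[s] / zlib_entropy(s))


def verbatim_match(candidate, corpus_docs, n=8):
    """True if `candidate` shares any run of n consecutive words with a corpus document."""
    words = candidate.split()
    grams = {tuple(words[i:i + n]) for i in range(len(words) - n + 1)}
    return any(
        tuple(doc_words[i:i + n]) in grams
        for doc_words in (doc.split() for doc in corpus_docs)
        for i in range(len(doc_words) - n + 1)
    )


# Hypothetical samples and placeholder log-perplexities (not real model output).
samples = [
    "Contact Jane Roe at jane.roe@example.com or 555-0100 for details",
    "the the the the the the the the",
    "Contact Jane Roe at jane.roe@example.com or 555-0100 for details",
    "a generic sentence about the weather today and tomorrow",
]
log_ppl = {samples[0]: 0.4, samples[1]: 0.2, samples[3]: 2.9}
corpus = ["intro text Contact Jane Roe at jane.roe@example.com or 555-0100 for details end"]

ranked = rank_candidates(samples, log_ppl)
flagged = [s for s in ranked[:2] if verbatim_match(s, corpus)]
print(flagged)
```

The repetitive sample has the lowest perplexity, but it also compresses extremely well, so the ratio pushes the PII-like string to the top instead.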

Their key findings: GPT-2 had memorised content that it would reproduce verbatim, including personally identifiable information (specific individuals’ names with email addresses and phone numbers), code snippets from GitHub, and specific private content from the training web crawl. The memorisation rate was higher for data that appeared multiple times in training (duplication increases memorisation), for data near the beginning or end of training documents, and for larger models (larger GPT-2 variants memorised more than smaller ones).

Subsequent research by Carlini et al. in 2022 extended this to GPT-Neo and other models, confirming that memorisation is a general property of LLMs at scale, not an artefact of GPT-2’s specific training. The extractable rate increases with model size, with how often an example is duplicated in training, and with the length of the prompt context, and it decreases with training data diversity and deduplication. That has critical implications for organisations training models on smaller, less diverse proprietary datasets, where the memorisation risk is concentrated.
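The 2022 follow-up measures memorisation by prompting the model with a prefix taken from a training example and checking whether greedy decoding reproduces the true continuation exactly. The sketch below runs that test against a hypothetical toy word-bigram model standing in for an LLM. `train_bigram`, `greedy_continue`, and `is_extractable` are illustrative names. Note that a bigram model only looks at the last prefix word, unlike a real LLM, which conditions on the whole context.

```python
from collections import Counter, defaultdict


def train_bigram(corpus_docs):
    """Toy stand-in for an LLM: count which word follows which in the training docs."""
    counts = defaultdict(Counter)
    for doc in corpus_docs:
        words = doc.split()
        for prev, nxt in zip(words, words[1:]):
            counts[prev][nxt] += 1
    return counts


def greedy_continue(model, prefix, length):
    """Greedy decoding: repeatedly emit the single most likely next word."""
    out = list(prefix)
    for _ in range(length):
        options = model.get(out[-1])
        if not options:
            break
        out.append(options.most_common(1)[0][0])
    return out[len(prefix):]


def is_extractable(model, example, k, suffix_len):
    """True if the first k words of `example` greedily regenerate its next suffix_len words."""
    words = example.split()
    prefix, target = words[:k], words[k:k + suffix_len]
    return greedy_continue(model, prefix, len(target)) == target


# Hypothetical training data: one sensitive record, seen once vs duplicated five times.
secret = "patient 4471 was prescribed warfarin after the stroke"
public = ["the weather after the storm was calm",
          "the clinic was busy after the weekend"]
model_once = train_bigram(public + [secret])
model_dup = train_bigram(public + [secret] * 5)

print(is_extractable(model_once, secret, k=2, suffix_len=6))  # False: loses the tie after "was"
print(is_extractable(model_dup, secret, k=2, suffix_len=6))   # True: duplication wins every step
```

This shows the duplication effect in miniature. A single copy of the record competes with other continuations, while five copies dominate every greedy choice.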

⏱️ 15 minutes · Browser only

Reading the primary research directly gives you the methodology, findings, and implications in the researchers’ own words — which is more precise than any summary. This is the foundational paper for LLM privacy attack research.

Step 1: Find and read the paper

Search: “Extracting Training Data from Large Language Models Carlini 2021”

Find the paper on arXiv or the published version.

Read the abstract and Section 1 (Introduction).

What was the most significant PII finding from their GPT-2 extraction?

Step 2: Understand their methodology

Read Section 3 (Approach) or the methodology section.

What is “greedy decoding”, and how does the choice of decoding strategy affect how much memorised text is extracted?

How did they verify that extracted text was actually in training data?

Step 3: Find the follow-up 2022 research

Search: “Quantifying Memorization Across Neural Language Models Carlini 2022”

How did memorisation scale with model size in their findings?

What was the memorisation rate difference between small and large models?

Step 4: Find LLM memorisation research from 2024-2025

Search: “LLM training data extraction GPT-4 memorisation 2024”

Search: “ChatGPT training data memorisation privacy research”

Has similar extraction been demonstrated on more recent models?

What defences have model providers implemented?

Step 5: Find industry response — how do AI providers address memorisation?

Search: “OpenAI Anthropic training data memorisation privacy mitigation”

What technical measures do providers describe for reducing memorisation?

What does their documentation say about training data privacy?

📸 Screenshot the most significant PII finding from the Carlini et al. research and share in #ai-security.

Membership Inference Attacks

Membership inference is the attack I recommend every AI team test first. It is weaker than full training data extraction but more scalable, faster to run, and almost always reveals something. The attacker doesn’t need to reconstruct training content; they just need to determine whether a specific record was in the training set. This is a privacy violation in its own right: confirming that a patient’s record was in a medical AI’s training set, or that a person’s browsing history was in a recommender system’s training data, reveals sensitive facts without recovering the underlying data.

The attack methodology exploits the statistical difference in how models treat data they’ve “seen” versus data they haven’t. Models generally assign higher confidence and lower loss to training examples than to held-out examples — a consequence of overfitting that persists even in large, well-regularised models. A classifier trained on medical records may assign slightly higher diagnostic confidence to records it trained on than to structurally similar records it didn’t. An attacker with access to confidence scores can exploit this difference to infer training membership.
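Below is a minimal sketch of the simplest version, a loss-threshold attack, which flags a record as a training member when the model's loss on its true label falls below a threshold. The target is a hypothetical, deliberately overfit nearest-neighbour classifier. Its confidence decays with distance to the closest training point, so members score near-perfect confidence. All records are synthetic, and the threshold is an assumed value that an attacker would normally calibrate on records with known membership.

```python
import math
import random

rng = random.Random(0)


def make_record():
    """Synthetic 2-D record whose label depends on the first feature."""
    x = (rng.uniform(-1, 1), rng.uniform(-1, 1))
    return x, int(x[0] > 0)


members = [make_record() for _ in range(50)]      # the training set
non_members = [make_record() for _ in range(50)]  # same distribution, never trained on


def confidence(x, label, train=members, scale=10.0):
    """Overfit 1-nearest-neighbour 'model': confidence in `label` decays with the
    distance to the closest training point, and is ~1.0 on training points."""
    dist, nn_label = min((math.dist(x, tx), ty) for tx, ty in train)
    agree = math.exp(-scale * dist)
    p = 0.5 + 0.5 * agree if nn_label == label else 0.5 - 0.5 * agree
    return min(max(p, 1e-9), 1 - 1e-9)  # keep log() finite


def loss_threshold_attack(records, threshold):
    """Predict 'member' whenever the cross-entropy loss on the true label is below threshold."""
    return [-math.log(confidence(x, y)) < threshold for x, y in records]


threshold = 0.01  # hypothetical; calibrated on known members/non-members in practice
tpr = sum(loss_threshold_attack(members, threshold)) / len(members)
fpr = sum(loss_threshold_attack(non_members, threshold)) / len(non_members)
print(f"TPR={tpr:.2f} FPR={fpr:.2f} advantage={tpr - fpr:.2f}")
```

Real models separate members from non-members far less cleanly than this toy does. Practical attacks therefore compare the two loss distributions statistically rather than relying on a perfect gap.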

⏱️ 15 minutes · No tools — risk analysis only

The privacy risk of model inversion and memorisation attacks is not uniform — it depends heavily on what data the model trained on, how it was trained, and how it’s deployed. Working through the risk factors concretely reveals which deployments need differential privacy and which have lower inherent risk.

For each: identify the primary risk factor and the highest-priority mitigation.

DEPLOYMENT A: GPT-4 (OpenAI API) deployed as a customer service chatbot.

Training data: massive diverse internet crawl (unknown to deployer).

Fine-tuning: none — deployed as-is via API.

Data the deployment processes: customer queries (no fine-tuning on these).

DEPLOYMENT B: A medical AI fine-tuned on 50,000 patient records to predict readmission risk. Deployed internally to hospital staff.

Fine-tuning dataset: contains names, DOBs, diagnoses, medications.

DP training: not applied.

DEPLOYMENT C: A code completion model fine-tuned on a company’s private codebase (1M lines). Deployed to all 500 developers.

Fine-tuning dataset: includes internal API keys, database schemas, proprietary algorithms in comments.

DEPLOYMENT D: A sentiment analysis classifier trained on 10M public tweets to predict brand sentiment. No PII in training data.

DP training: not applied.

DEPLOYMENT E: Same as B (medical AI), but trained with DP-SGD at epsilon=5. Otherwise identical deployment.

QUESTION 1 — Risk Ranking

Rank A through E from highest to lowest memorisation/extraction risk.

Explain the primary risk factor for each.

QUESTION 2 — Regulatory Exposure

Which deployments have GDPR or HIPAA privacy compliance implications?

If an attacker performs membership inference on Deployment B and confirms that specific named patients were in the training set, is that a breach even without recovering the actual patient records?

QUESTION 3 — Mitigation Priority

For your highest-risk deployment: what’s the single most impactful technical control that should be applied before deployment?

QUESTION 4 — Right to Erasure Challenge

A patient whose records were in Deployment B’s training set submits a GDPR right-to-erasure request.

Can the hospital comply? What are the technical options?

📸 Share your risk ranking with reasoning in #ai-security. Disagree with someone else’s ordering?

Differential Privacy — The Mathematical Defence

Differential privacy is the defence mechanism I evaluate most carefully, because the implementation details determine whether it actually provides protection. It is the only current technique with formal mathematical guarantees against memorisation and membership inference. DP-SGD (differentially private stochastic gradient descent) clips each training example’s gradient to a maximum norm and then adds calibrated Gaussian noise to the gradient updates during training. Together, these steps ensure that the distribution of trained models changes by at most a bounded amount whether any individual training example was included or excluded. An attacker querying a DP-trained model therefore cannot determine training membership better than the bound set by the epsilon parameter allows.
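Below is a minimal sketch of one DP-SGD update for a logistic-regression model in plain Python. Each example's gradient is clipped to L2 norm at most `max_norm`, the clipped gradients are summed, Gaussian noise with standard deviation `sigma * max_norm` is added, and the result is averaged over the batch. The function names and the values `sigma=1.1` and `max_norm=1.0` are illustrative assumptions. The sketch also omits the privacy accountant that converts sigma, batch sampling, and step count into an epsilon (libraries such as TensorFlow Privacy and Opacus provide one), and it samples fixed-size batches for simplicity.

```python
import math
import random


def per_example_grad(w, x, y):
    """Logistic-loss gradient for one example; the last weight is the bias."""
    xb = list(x) + [1.0]
    z = sum(wi * xi for wi, xi in zip(w, xb))
    p = 1.0 / (1.0 + math.exp(-z))
    return [(p - y) * xi for xi in xb]


def clip(g, max_norm):
    """Scale g down, if needed, so its L2 norm is at most max_norm."""
    norm = math.sqrt(sum(gi * gi for gi in g))
    factor = min(1.0, max_norm / norm) if norm > 0 else 1.0
    return [gi * factor for gi in g]


def dp_sgd_step(w, batch, lr=0.1, max_norm=1.0, sigma=1.1, rng=random):
    """One DP-SGD update: clip each example's gradient, sum, add Gaussian noise, average."""
    summed = [0.0] * len(w)
    for x, y in batch:
        g = clip(per_example_grad(w, x, y), max_norm)
        summed = [s + gi for s, gi in zip(summed, g)]
    # Clipping bounds any one example's influence on the sum by max_norm,
    # so the noise standard deviation is sigma * max_norm.
    noisy = [s + rng.gauss(0.0, sigma * max_norm) for s in summed]
    return [wi - lr * ni / len(batch) for wi, ni in zip(w, noisy)]


# Toy usage: learn "x > 0" from 1,000 synthetic points.
rng = random.Random(0)
data = [((x,), int(x > 0)) for x in (rng.uniform(-1, 1) for _ in range(1000))]
w = [0.0, 0.0]
for _ in range(300):
    w = dp_sgd_step(w, rng.sample(data, 64), rng=rng)
acc = sum((w[0] * x[0] + w[1] > 0) == bool(y) for x, y in data) / len(data)
print(f"weights={w}, accuracy={acc:.2f}")
```

Clipping comes first by design. Without a bound on each example's contribution, no finite amount of noise could hide whether that example was present.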

The practical challenge is the accuracy-privacy tradeoff. Smaller epsilon (stronger privacy guarantee) requires more noise, which degrades model accuracy. For many sensitive applications — medical AI, financial risk models — the accuracy cost of meaningful DP protection (epsilon < 1) is significant enough to affect deployment utility. Most production applications that use DP operate at epsilon values in the range of 1–10, providing some protection but not the strongest theoretical guarantee.
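The worst-case membership advantage shows why epsilon values of 1 to 10 give only partial protection. The derivation below assumes pure ε-DP (δ = 0) and the standard hypothesis-testing view of membership inference. Any attack's true- and false-positive rates must satisfy both inequalities on the left, and maximising TPR minus FPR under them gives the bound on the right:

```latex
\mathrm{TPR} \le e^{\varepsilon}\,\mathrm{FPR},
\qquad
1-\mathrm{FPR} \le e^{\varepsilon}\,(1-\mathrm{TPR})
\quad\Longrightarrow\quad
\mathrm{TPR}-\mathrm{FPR} \;\le\; \frac{e^{\varepsilon}-1}{e^{\varepsilon}+1} \;=\; \tanh\!\left(\frac{\varepsilon}{2}\right) \\
\varepsilon = 1:\ \tanh(0.5) \approx 0.46,
\qquad
\varepsilon = 5:\ \tanh(2.5) \approx 0.987,
\qquad
\varepsilon = 10:\ \tanh(5) \approx 0.9999
```

Read this as a worst case. At ε = 5 (Deployment E in the exercise below), the guarantee alone would not rule out a near-perfect attacker, although measured attacks on DP-trained models often fall well short of the bound. With δ > 0, both inequalities gain an additive δ term, which loosens the bound further.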

⏱️ 15 minutes · Browser only

Understanding differential privacy implementation goes from theoretical to practical when you see the actual frameworks used in production. The tooling is more accessible than its academic foundations suggest.

Step 1: Find TensorFlow Privacy

Search: “TensorFlow Privacy DP-SGD GitHub”

What does DP-SGD actually modify in the training loop?

What parameters does the library require you to set?

What epsilon value does Apple use for iOS differential privacy data collection?

Step 2: Find Opacus (Meta’s DP training library for PyTorch)

Search: “Opacus PyTorch differential privacy GitHub”

How does Opacus differ from TensorFlow Privacy in implementation?

What’s the minimum code change to add DP training to a PyTorch model?

Step 3: Research the accuracy-privacy tradeoff empirically

Search: “differential privacy epsilon accuracy tradeoff LLM fine-tuning 2024”

What accuracy loss do research papers report for DP fine-tuning at epsilon=1?

At epsilon=8 (Apple’s value for some features)?

Is DP fine-tuning of LLMs practically feasible at epsilon < 3?

Step 4: Find model unlearning research (for right-to-erasure)

Search: “machine unlearning LLM GDPR right to erasure 2024 2025”

Is there a practical solution to removing specific training examples from a trained model?

What does current research say about the feasibility of exact unlearning for LLMs?

Step 5: Research the EU AI Act and training data privacy

Search: “EU AI Act training data privacy memorisation 2024”

Does the EU AI Act address memorisation or training data privacy?

What obligations does it create for high-risk AI system training data?

📸 Share what you found about epsilon-accuracy tradeoffs in #ai-security. Tag #DifferentialPrivacy


✅ Article 32 Complete — Model Inversion Attacks 2026

Model inversion, training data extraction, membership inference, differential privacy, and the right-to-erasure challenge. The Carlini et al. research established memorisation as a real, measurable property of deployed LLMs. DP training provides the mathematical bounds but at an accuracy cost that constrains adoption. Article 33 covers AI worms — self-propagating malware that uses LLMs as propagation engines.




📚 Further Reading

- Training Data Poisoning Attacks 2026 — Article 18 — the offensive counterpart to memorisation: poisoning training data to influence model behaviour, vs extraction attacks that recover training data. Both exploit the training data-model relationship.

- AI Supply Chain Attacks 2026 — Article 13 — the broader supply chain context: model inversion attacks are one attack class within the AI supply chain threat model, targeting the training data that flows into deployed models.

- LLM Membership Inference & Privacy 2026 — Article 88 — a dedicated deep-dive into membership inference methodology, attack variants, and current defences beyond differential privacy.

- Carlini et al. 2021 — Extracting Training Data from LLMs (arXiv) — The foundational research paper demonstrating verbatim training data extraction from GPT-2 — the primary source for understanding LLM memorisation as a demonstrated attack class.

- Opacus — PyTorch Differential Privacy Library — Meta’s open-source DP training library for PyTorch — the practical implementation tool for adding differential privacy to fine-tuning pipelines, with documentation on epsilon-accuracy tradeoffs.