No malicious intent. No policy violation awareness. Just a productivity tool that had quietly become a channel for some of the most sensitive documents in the organisation to flow to an external AI provider’s servers under consumer-tier data handling terms.

That’s shadow AI. And it’s happening in virtually every organisation right now — not because employees are careless, but because AI tools are genuinely useful and AI governance has lagged years behind AI adoption.

⏱️ 18 min read · 3 exercises

Shadow AI Risk Taxonomy

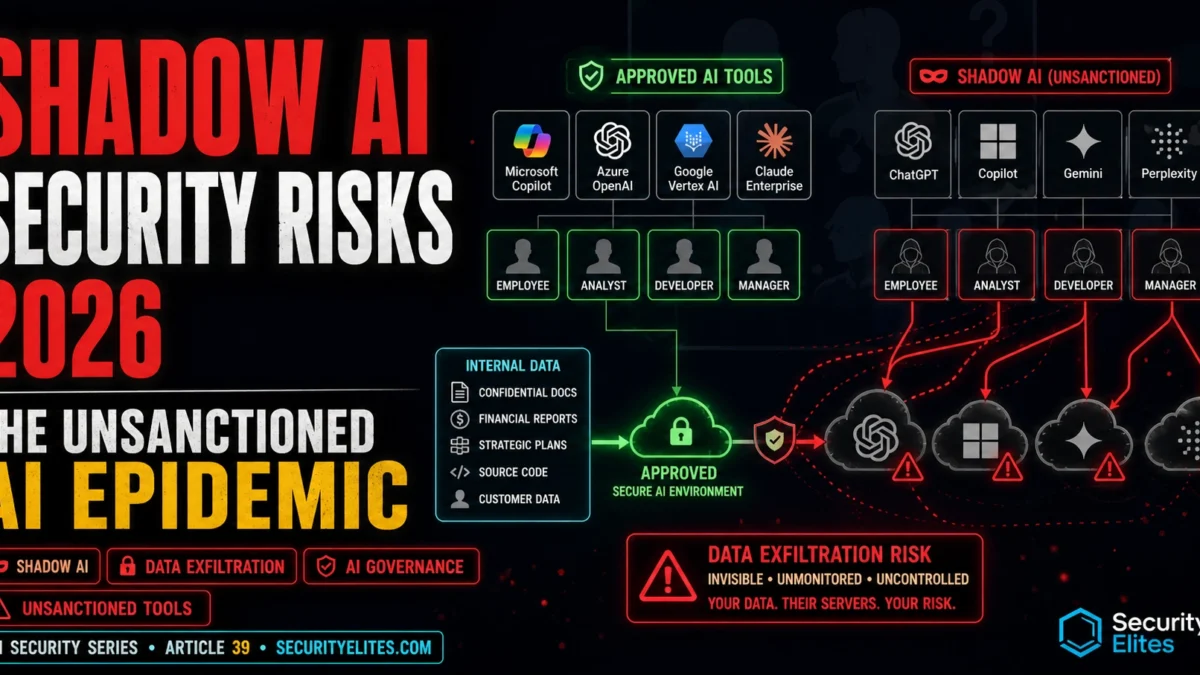

Every shadow AI governance programme I’ve helped build starts with the same principle: visibility before restriction. You can’t address what you can’t see, so my discovery methodology combines technical discovery with employee surveys, and the technical controls I prioritise for high-risk shadow AI focus on the data paths, not the tools. I also sort shadow AI risks into three tiers based on the sensitivity of the data involved, which helps with prioritisation when the problem feels overwhelming.

Shadow AI risks cluster into four categories. Data exfiltration is the most immediate: sensitive documents, source code, customer data, and strategic information submitted to consumer AI platforms flow to external servers under data handling terms the organisation has neither reviewed nor agreed to. Unlike traditional data exfiltration, the submission is usually intentional from the employee’s perspective (they’re using a tool) but unintentional from a security perspective (they don’t understand the data flow).

Compliance violations are the most legally significant: submitting personal data to AI providers without Data Processing Agreements in place is a potential GDPR violation. Submitting patient data to a consumer AI tool is a potential HIPAA violation. These violations are created by individual employees making productivity decisions — not by deliberate policy choices — which makes them difficult to prevent without either governance frameworks or technical controls.

Credential and secret exposure is the most technically dangerous: source code pasted into AI assistants frequently contains API keys, database passwords, and internal service credentials in comments or configuration. An employee asking an AI coding assistant to review their code may inadvertently submit credentials that appear in the code context. The credentials then exist in the AI provider’s conversation logs with whatever data retention and access controls apply to that account tier.
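As a minimal sketch of how a pre-submission check might reduce this exposure, the snippet below redacts credential-like strings from a code fragment before it is pasted into an assistant. The rule list and the `redact_secrets` helper are illustrative assumptions, not a complete detector: the first rule relies on the documented `AKIA` prefix of long-term AWS access key IDs, and the second is a generic heuristic for quoted values assigned to credential-like names.

```python
import re

# Illustrative rules only (assumptions): production secret scanners ship far larger rule sets.
SECRET_RULES = [
    # Long-term AWS access key IDs: "AKIA" followed by 16 uppercase alphanumerics.
    (re.compile(r"\bAKIA[0-9A-Z]{16}\b"), "[REDACTED_AWS_KEY_ID]"),
    # Heuristic: a quoted value of 8+ characters assigned to a credential-like name.
    (
        re.compile(
            r"(?i)((?:password|passwd|secret|api_key|token)\w*\s*[:=]\s*)(['\"])[^'\"]{8,}\2"
        ),
        r"\1\2[REDACTED]\2",  # keep the name and the quotes, drop the value
    ),
]


def redact_secrets(text: str) -> tuple[str, int]:
    """Return the text with credential-like values replaced, plus the replacement count."""
    total = 0
    for pattern, replacement in SECRET_RULES:
        text, count = pattern.subn(replacement, text)
        total += count
    return text, total


if __name__ == "__main__":
    snippet = 'db_password = "hunter2-prod-2024"\nkey_id = "AKIAABCDEFGHIJKLMNOP"\n'
    cleaned, replaced = redact_secrets(snippet)
    print(cleaned)
    print(f"{replaced} value(s) redacted")
```

Redaction rather than outright blocking keeps the review workflow intact, which fits the point that employees are using these tools for legitimate productivity reasons.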

| | Free | Paid | Enterprise |
|---|---|---|---|
| | Critical | High | Low (DPA) |
| | Critical | High | Medium |
| | Critical | High | Medium |
| | Low | Low | Low |

Shadow AI Discovery Methodology

Shadow AI discovery combines passive traffic analysis (what AI endpoints are corporate devices connecting to?) with active assessment (what data is being submitted to those endpoints?). Network proxy and DNS logs are the starting point: connections to known AI provider domains (api.openai.com, claude.ai, api.anthropic.com, gemini.google.com, copilot.microsoft.com) from corporate devices reveal the footprint of shadow AI usage without monitoring content.
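The passive half of this can be sketched in a few lines. The example below maps each AI provider domain to the set of client IPs that queried it. The watchlist contains only the domains named above, and the log format (`<timestamp> <client_ip> <hostname>`, whitespace-separated) is a hypothetical assumption, since real resolver and proxy logs vary by product.

```python
from collections import defaultdict
from typing import Optional

# Domains named in the text; a real watchlist would be longer and maintained over time.
AI_DOMAINS = {
    "api.openai.com",
    "claude.ai",
    "api.anthropic.com",
    "gemini.google.com",
    "copilot.microsoft.com",
}


def matches_ai_domain(hostname: str) -> Optional[str]:
    """Return the watchlist domain that hostname equals or is a subdomain of."""
    hostname = hostname.lower().rstrip(".")  # DNS logs often carry a trailing dot
    for domain in AI_DOMAINS:
        if hostname == domain or hostname.endswith("." + domain):
            return domain
    return None


def ai_footprint(log_lines):
    """Map each AI domain to the set of client IPs that queried it.

    Assumes one query per line: '<timestamp> <client_ip> <hostname>' (hypothetical format).
    """
    clients_by_domain = defaultdict(set)
    for line in log_lines:
        parts = line.split()
        if len(parts) < 3:
            continue  # skip malformed lines
        client_ip, hostname = parts[1], parts[2]
        domain = matches_ai_domain(hostname)
        if domain:
            clients_by_domain[domain].add(client_ip)
    return dict(clients_by_domain)


if __name__ == "__main__":
    sample = [
        "2025-01-01T09:00:00Z 10.0.0.5 api.openai.com",
        "2025-01-01T09:00:01Z 10.0.0.6 claude.ai.",
        "2025-01-01T09:00:02Z 10.0.0.5 intranet.example.com",
    ]
    for domain, clients in ai_footprint(sample).items():
        print(f"{domain}: {len(clients)} device(s)")
```

Counting distinct clients rather than raw queries gives a footprint figure (how many devices touch each provider) without inspecting any request content.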

DLP (Data Loss Prevention) rules add the content dimension: rules matching sensitive data patterns (document fragments, PII, code signatures) in outbound requests to uncategorised or AI-provider domains identify high-risk shadow AI submissions. Browser extension audits add another dimension: extensions with “read all page content” permissions can access authenticated internal web applications — an AI browser extension installed by an employee can read their internal HR system, financial application, or customer database as they browse.
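For the extension audit, one possible starting point is to inspect each installed extension's `manifest.json` for match patterns that cover every site. The sketch below assumes the manifest has already been loaded into a dictionary; the `broad_access_reasons` helper is hypothetical, and it checks only a handful of all-site patterns in the `permissions`, `host_permissions`, and `content_scripts` fields rather than evaluating every possible wildcard.

```python
import json

# Match patterns that grant access to every site an employee visits.
BROAD_PATTERNS = {"<all_urls>", "*://*/*", "http://*/*", "https://*/*"}


def _broad_hits(values) -> list[str]:
    """Return the all-site patterns present in a list of permission entries."""
    return sorted(v for v in values if isinstance(v, str) and v in BROAD_PATTERNS)


def broad_access_reasons(manifest: dict) -> list[str]:
    """List the manifest fields that give an extension access to all pages."""
    reasons = []
    for field in ("permissions", "host_permissions"):
        hits = _broad_hits(manifest.get(field, []))
        if hits:
            reasons.append(f"{field}: {hits}")
    for script in manifest.get("content_scripts", []):
        hits = _broad_hits(script.get("matches", []))
        if hits:
            reasons.append(f"content_scripts: {hits}")
    return reasons


if __name__ == "__main__":
    # Hypothetical manifest for an AI writing assistant extension.
    raw = """{
      "name": "Example AI Email Helper",
      "manifest_version": 3,
      "permissions": ["storage"],
      "host_permissions": ["<all_urls>"],
      "content_scripts": [{"matches": ["https://*/*"], "js": ["content.js"]}]
    }"""
    for reason in broad_access_reasons(json.loads(raw)):
        print("broad access via", reason)
```

An extension flagged here can read authenticated internal pages in the same way it reads any public page, which is why it belongs in the discovery scope alongside network logs.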

⏱️ 15 minutes · Browser only

Reading the actual data policies of the AI providers your employees are most likely using is the foundational step in shadow AI risk assessment — the policy tells you what actually happens to submitted data, which determines the risk level of each usage pattern.

Step 1: Read OpenAI’s current data policies for ChatGPT

Go to: openai.com/policies/privacy-policy and help.openai.com

Search: “OpenAI data retention training opt-out enterprise vs consumer”

Answer: Does free-tier ChatGPT use conversations for training by default?

Answer: What is the difference between consumer and enterprise data handling?

Answer: How long is conversation data retained?

Step 2: Read Anthropic’s current data policies for Claude

Go to: anthropic.com/privacy

Does Claude.ai (consumer) use conversations for model training?

What data handling guarantees does Claude for Business provide?

Step 3: Check Google Gemini data policies

Search: “Google Gemini data retention training consumer vs workspace 2025”

What are the differences between personal Google account and Workspace usage?

Step 4: Estimate your organisation’s shadow AI footprint

For each of 5 common employee roles at a typical organisation:

Developer, Finance analyst, Legal, HR, Marketing

What AI tools are they most likely using?

What type of data are they most likely submitting?

What is the risk level (Critical/High/Medium/Low) for each combination?

Step 5: Find GDPR and HIPAA guidance on AI tool use

Search: “ICO guidance AI tools employee data 2024 2025”

Search: “HIPAA AI tool employee use patient data”

What do regulators say about employee use of consumer AI tools with regulated data?

📸 Share your 5-role shadow AI risk estimate table in #ai-security.

AI Provider Data Policies — What Actually Happens to Your Data

AI provider data policies are the one area I always audit in detail before an organisation approves any AI tool for employee use. The practical answer to “what happens to data submitted to consumer AI tools” varies by provider, account tier, and configuration — and changes over time as providers update their terms. The consistent pattern: enterprise tiers provide stronger data handling guarantees (no training use, defined retention periods, DPA availability) than consumer tiers. The governance implication is that “use an enterprise tier” is a meaningful risk reduction, not just a compliance checkbox.

Training data opt-out is available from most major providers but requires explicit action from the user — and most consumer-tier users have never changed the default settings. An employee who signed up for free-tier ChatGPT in 2023 may be using defaults that have been updated multiple times since then. Shadow AI governance needs to account for this: even employees who think they’re using AI responsibly may be operating under data handling terms they haven’t reviewed since initial sign-up.

Building AI Governance That Works

AI governance that only says “don’t use unapproved AI tools” fails in practice because it creates a conflict between policy compliance and productivity that employees resolve by using personal devices and networks — making the risk invisible rather than eliminating it. Effective shadow AI governance provides legitimate alternatives alongside the restrictions: an approved enterprise-tier tool that meets the productivity need, clear guidance on what data is appropriate for which tools, and a fast-track approval process for new AI tools.

The approved AI tools list needs to be specific enough to be usable: “what AI tools can I use to summarise a contract?” should have a clear answer without requiring an escalation to the security team. Vague policies (“use AI tools responsibly”) don’t change behaviour because they don’t give employees the specific information they need to make compliant choices. Specificity in the approved tool list — which tools, for which data classifications, with which configuration requirements — is what makes governance actionable rather than aspirational.
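One way to make the list answerable without escalation is to store it as data and query it. The sketch below uses the four data tiers and the tool names from this tutorial's final exercise, but the ceiling assigned to each tool is a hypothetical placeholder, not a recommendation; each organisation would set these from its own contract and configuration review.

```python
from enum import IntEnum


class DataClass(IntEnum):
    """Data classification tiers, ordered from least to most sensitive."""
    PUBLIC = 0
    INTERNAL = 1
    CONFIDENTIAL = 2
    RESTRICTED = 3


# Hypothetical policy: the highest classification each approved tool may receive.
APPROVED_TOOLS = {
    "ChatGPT Enterprise": DataClass.CONFIDENTIAL,
    "Claude for Business": DataClass.CONFIDENTIAL,
    "GitHub Copilot Business": DataClass.INTERNAL,
    "Microsoft Copilot (M365)": DataClass.CONFIDENTIAL,
}


def tools_allowed_for(classification: DataClass) -> list[str]:
    """Return the approved tools that may receive data of this classification."""
    return sorted(
        tool for tool, ceiling in APPROVED_TOOLS.items() if classification <= ceiling
    )


def is_allowed(tool: str, classification: DataClass) -> bool:
    """Unlisted tools are treated as not approved for any data."""
    ceiling = APPROVED_TOOLS.get(tool)
    return ceiling is not None and classification <= ceiling


if __name__ == "__main__":
    # "What AI tools can I use to summarise a contract?" (contract assumed Confidential)
    print(tools_allowed_for(DataClass.CONFIDENTIAL))
    print(is_allowed("Free ChatGPT", DataClass.PUBLIC))
```

Treating unlisted tools as denied by default keeps the table authoritative, while the fast-track approval process is what adds new rows.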

⏱️ 15 minutes · No tools — risk analysis only

Thinking about shadow AI from a threat model perspective — as a potential data exfiltration channel — reveals the specific scenarios where the risk is most acute and the controls that address them.

An internal audit finds that employees are using AI tools as follows:

– 15 developers using GitHub Copilot (personal accounts, not enterprise), submitting proprietary trading algorithm code

– 8 finance analysts using free ChatGPT to summarise earnings reports and analyst notes (internal, pre-publication)

– 3 HR staff using Claude.ai (free tier) to draft performance reviews containing employee personal data

– 12 sales staff using an AI email tool (browser extension) with permission to read all page content

– 5 legal staff using free ChatGPT to summarise client contracts

THREAT MODEL

TASK 1: Rank by Risk

Rank these 5 shadow AI usage patterns from highest to lowest risk.

Justify each ranking: what specific harm could result?

TASK 2: Immediate Action Items

For the top 2 highest-risk patterns: what action should the CISO take in the next 7 days? (Be specific, not “implement a policy”.)

TASK 3: Regulatory Exposure

Which of these 5 patterns creates the most significant regulatory risk?

What specific regulation applies and what is the potential penalty?

TASK 4: Browser Extension Threat

The AI email browser extension with “read all page content” permission:

What can it technically access as employees browse the corporate intranet?

What’s the worst-case scenario if this extension is malicious or compromised?

TASK 5: Governance Response

Design a 30-day shadow AI governance response that:

– Reduces the immediate highest risks

– Doesn’t trigger employee resistance through blanket prohibition

– Can realistically be implemented in 30 days

📸 Share your risk ranking with justifications in #ai-security. Disagree with someone else’s order?

Technical Controls for High-Risk Shadow AI

Technical controls for shadow AI address the highest-risk patterns without requiring every employee to change their workflow. DLP rules that block submissions of confidential data patterns to uncategorised AI endpoints catch the highest-risk shadow AI usage — source code with credential patterns, documents matching confidential classification templates, data matching PII format patterns — without blocking all AI access.
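The decision logic of such a rule can be sketched as follows. This is a simplified illustration, not how any particular DLP product works: the sanctioned host `ai.internal.example` is hypothetical, every other destination is treated as unsanctioned, and the three patterns are deliberately crude stand-ins for the credential, classification-template, and PII detectors a real deployment would use.

```python
import re
from urllib.parse import urlsplit

# Hypothetical sanctioned endpoint; submissions to it are not inspected in this sketch.
SANCTIONED_HOSTS = {"ai.internal.example"}

# Crude illustrative detectors (assumptions), one per data type named in the text.
SENSITIVE_PATTERNS = {
    "credential": re.compile(r"-----BEGIN (?:[A-Z]+ )?PRIVATE KEY-----"),
    "classification_marker": re.compile(r"(?i)\bconfidential\b"),
    "email_address": re.compile(r"\b[\w.+-]+@[\w-]+(?:\.[\w-]+)+\b"),
}


def dlp_decision(url: str, body: str) -> tuple[str, list[str]]:
    """Return ('allow' | 'block', matched pattern names) for an outbound request."""
    host = (urlsplit(url).hostname or "").lower()
    if host in SANCTIONED_HOSTS:
        return "allow", []
    matched = [name for name, pattern in SENSITIVE_PATTERNS.items() if pattern.search(body)]
    return ("block" if matched else "allow"), matched


if __name__ == "__main__":
    requests = [
        ("https://api.openai.com/v1/chat/completions", "Write a haiku about autumn"),
        ("https://api.openai.com/v1/chat/completions", "Summarise: STRICTLY CONFIDENTIAL board minutes"),
        ("https://ai.internal.example/chat", "Draft a reply to jane.doe@example.com"),
    ]
    for url, body in requests:
        print(urlsplit(url).hostname, dlp_decision(url, body))
```

Only requests that combine an unsanctioned destination with a sensitive match are blocked, so ordinary AI use passes through untouched.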

Browser extension policies are the most direct control for the extension risk vector: a managed device policy restricting extensions to an approved list removes the all-page-read AI extension risk on managed devices. Web filtering that blocks consumer AI platforms where approved enterprise alternatives are available steers employees towards sanctioned tools. CASB solutions provide the most comprehensive visibility: they show not just which AI providers employees are accessing, but how much data is flowing to each, which enables risk prioritisation before restrictive controls are implemented.

⏱️ 15 minutes · Browser only

A usable approved AI tools list is a concrete governance deliverable — it tells employees what they can and can’t use, for what data, without requiring security escalation for every AI tool decision. Building the template now makes it immediately applicable to your own organisation.

Step 1: Review existing AI tool governance frameworks

Search: “AI tool governance framework enterprise 2025”

Search: “NIST AI Risk Management Framework AI tools employees”

What classification structures do existing frameworks use?

Step 2: Define your data classification tiers

For a typical organisation, define 4 data tiers:

Public | Internal | Confidential | Restricted

For each: give 2 examples of data in that tier.

Step 3: Build an approved tool table

Create a table with columns: Tool | Tier | Data Classifications Allowed | Config Required | Notes

Fill in rows for: ChatGPT Enterprise, Claude for Business, GitHub Copilot Business, Microsoft Copilot (M365), and one tool to list as “Not Approved”.

For each: which data tiers are allowed? What configuration is required?

Step 4: Write a one-paragraph employee-facing policy statement

The statement should:

– Tell employees what AI tools they can use for what data

– Not require security escalation for the most common use cases

– Be understandable without a security background

– Be specific enough to cover the most common shadow AI patterns

Step 5: Define fast-track approval criteria

What criteria would allow a new AI tool to be fast-track approved in <5 business days? (Not a full security assessment, just the minimum bar for provisional approval.)

📸 Share your approved tool table and employee-facing policy statement in #ai-security.

✅ Complete — Shadow AI Security Risks 2026

This tutorial covered the shadow AI risk taxonomy, discovery methodology, AI provider data policies, effective governance frameworks, and technical controls. The governance principle: prohibition without alternatives drives risk underground. The risk model: the same AI tool at different subscription tiers has dramatically different risk profiles, so governance should move employees from consumer tiers to enterprise tiers with appropriate data handling rather than block AI use. The next tutorial closes Day 8 with AI-powered password cracking and how machine learning is changing credential attack techniques.

📚 Further Reading

- AI Supply Chain Attacks 2026 — the supply chain context for shadow AI: how unsanctioned AI tools become entry points for supply chain compromise when they process proprietary code or data that flows back to the provider’s training pipeline.

- Autonomous AI Agents Attack Surface 2026 — shadow AI agents specifically: when employees deploy autonomous AI agents without governance, the agent’s action surface becomes an extension of the shadow AI risk profile.

- Insecure AI Plugin Architecture Attacks 2026 — the plugin/extension risk that compounds shadow AI: AI browser extensions with broad permissions are the most acute technical risk in the shadow AI toolkit, with direct access to internal authenticated systems.

- NCSC — AI and Cyber Security: What You Need to Know — UK National Cyber Security Centre guidance on AI security including advice on evaluating AI tools for organisational use — a government-authoritative reference for AI governance conversations with senior leadership.

- NIST AI Risk Management Framework — The NIST AI RMF provides the formal risk management structure that shadow AI governance programmes should align to — the Govern, Map, Measure, Manage framework is directly applicable to AI tool assessment and approval workflows.