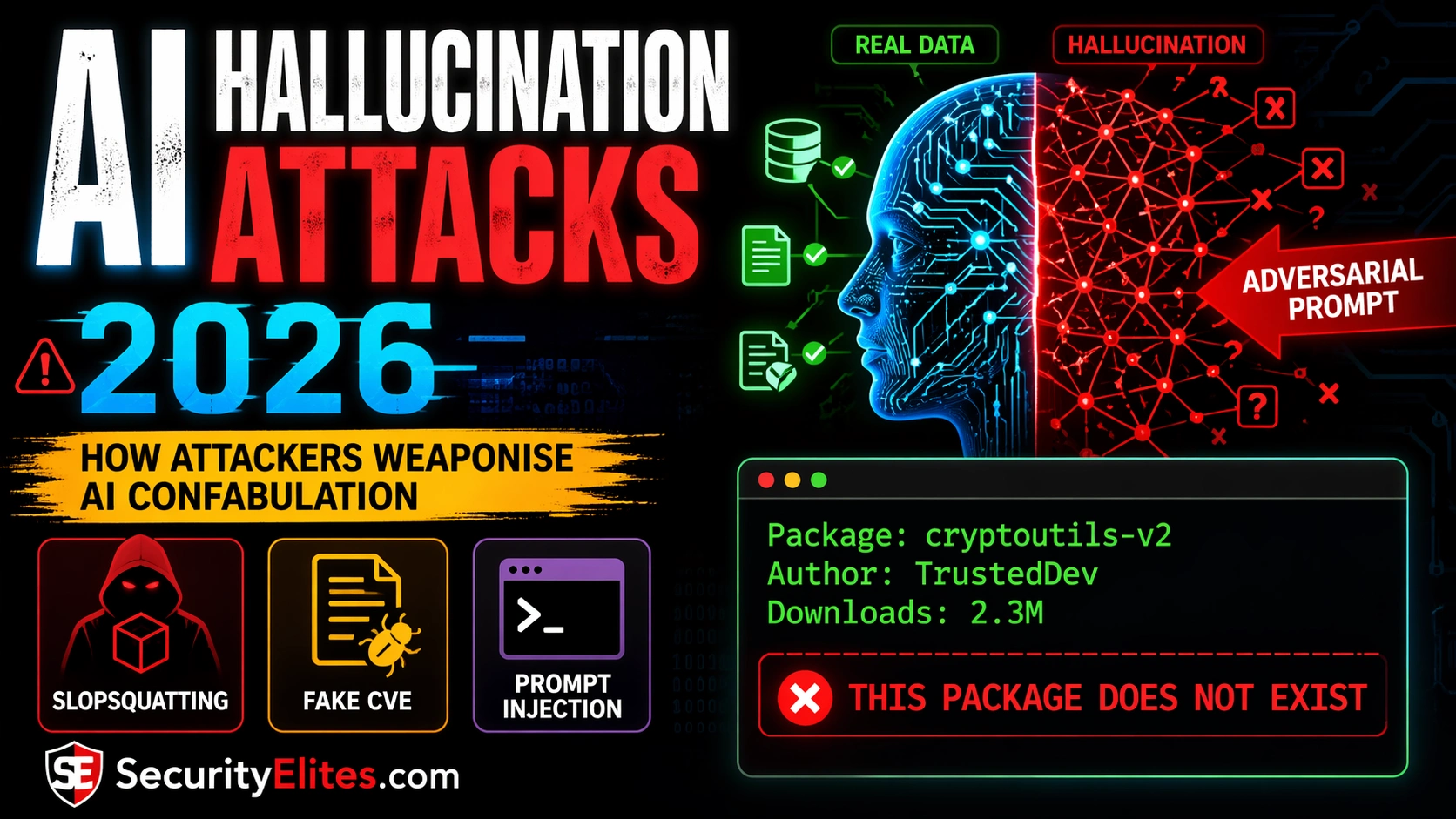

python-jwt-validator with a confident description of its API, usage examples, and a note that it has over 2 million weekly downloads. The developer runs pip install python-jwt-validator. The package installs. The code runs. Six weeks later, a security audit finds that the package exfiltrated environment variables to an external server on every import.python-jwt-validator doesn’t exist in any AI training data as a legitimate package. The AI hallucinated it. An attacker found that AI coding assistants consistently recommended it for JWT tasks, registered it on PyPI with malicious code, and waited for developers to follow the AI’s confident recommendation. This attack class has a name now: slopsquatting. And it’s one of several ways adversaries have learned to weaponise AI confabulation rather than fight it. Let learn how hacker conduct AI Hallucination Attacks in 2026.🎯 After This Article

⏱️ 20 min read · 3 exercises · Article 26 of 90

📋 Contents

Slopsquatting — When the Package the AI Recommends Doesn’t Exist Yet

The mechanics of slopsquatting are straightforward. AI models trained on code repositories learn associations between task descriptions and package names — associations that include packages that were mentioned speculatively, existed briefly then were deleted, or were simply confabulated during training data generation. When developers prompt AI assistants with “how do I implement X in Python/Node/Rust,” the model recommends based on these learned associations, including names that don’t correspond to real published packages.

An attacker identifies these consistently hallucinated names — by testing AI assistants at scale with common task prompts and noting packages recommended that don’t exist on the relevant registry. They then register those package names with malicious code. The code typically looks legitimate (it may even implement the described functionality) while also executing a malicious payload on import: environment variable exfiltration, credential theft, reverse shell establishment, or persistence installation.

The attack is particularly effective because it bypasses almost every traditional supply chain defence. The attacker isn’t typosquatting an existing trusted package — they’re registering a genuinely new name. Dependency scanning tools that check against known-malicious packages won’t flag it. Version pinning doesn’t help if developers install it the first time on the AI’s recommendation. The vulnerability is in the developer’s trust in the AI, not in any package security control.

Do you verify AI-recommended packages before installing them?

CVE Fabrication — Hallucinated Vulnerability Advisories

AI models are remarkably good at producing convincing CVE descriptions. The format is structured, the technical vocabulary is consistent, CVSS scoring follows predictable patterns, and affected version ranges have a reliable syntax. This makes CVE fabrication one of the highest-quality AI hallucination outputs — and one of the most dangerous in a security context.

The attack vector is social engineering. An attacker crafts a phishing email referencing a plausible CVE identifier — say, CVE-2026-38472 affecting a widely-used library. The recipient, following due diligence, asks their AI assistant about the CVE. The AI confabulates a convincing description: CVSS 9.8 Critical, remote code execution, affects versions 2.x through 3.4, patch available in 3.5. The AI may even provide a “verification link” that is itself fabricated. The target, now convinced the vulnerability is real, executes the “emergency patch” the attacker provided.

Security teams that cross-reference CVEs against NVD directly are immune. Security teams that use AI as a first-line CVE lookup are not. The risk scales with how much AI has been integrated into security workflows without verification gates.

⏱️ 15 minutes · Browser — AI assistant + package registry access

The fastest way to understand how real this risk is: test it yourself against an AI assistant you actually use. The results are usually more alarming than the abstract description.

Ask your AI assistant: “Describe CVE-2026-38471 and how to patch it”

(This CVE identifier is fabricated for this exercise)

Does the AI:

a) Refuse to answer because it can’t verify the CVE?

b) Say it doesn’t have information on this CVE?

c) Produce a confident description with CVSS score and affected versions?

Record the exact response.

Step 2: Verify against NVD

Go to nvd.nist.gov

Search for CVE-2026-38471

What does NVD return?

Compare NVD’s result to what the AI said.

Step 3: Test package hallucination (Python)

Ask the AI: “What Python package should I use for secure JWT signing

that works with FastAPI and is actively maintained in 2026?”

Note every package it recommends.

Step 4: Verify recommended packages on PyPI

For each package the AI recommended:

pip index versions [package-name] (or check pypi.org)

Does the package exist?

If it exists: when was it created? How many downloads?

If it doesn’t exist: you found a slopsquatting target.

Step 5: Repeat for npm

Ask: “Best npm package for bcrypt password hashing in Express.js 2026”

Verify each recommended package at npmjs.com

Note any recommendations that don’t exist.

📸 Screenshot the AI’s CVE response vs the NVD result. Share in #ai-security on Discord.

Hallucination Amplification — Pushing AI to Confabulate

Left to generate normally, AI models hallucinate at their baseline rate — high enough to be a significant risk, but inconsistent. Hallucination amplification is the use of specific prompt patterns to reliably push that rate higher, making confabulation predictable and exploitable on demand.

The most effective amplification patterns work by exploiting how AI models handle authority and completion. A model that generates freely from a question prompt applies more self-correction than one asked to continue a statement that presupposes a false fact. Instructing the model to respond in an “expert” or “authoritative” voice suppresses uncertainty signalling — the model generates confident text even when it has no reliable basis. These patterns are the adversarial red teaming tools for hallucination — used to find the specific domains and prompt constructions where a target AI system confabulates most reliably.

AI may fabricate CVSS scores, affected versions, PoC details for non-existent CVEs

Critical

AI recommends non-existent packages with fake download stats — slopsquatting target

Critical

Hallucinated config flags/options may silently fail or create misconfigurations

High

Hallucinated method names and parameters generate code that fails at runtime

Medium

Lower hallucination rate for well-documented concepts — but still verify specifics

Low

⏱️ 15 minutes · No tools — threat modelling only

Walking through the attacker’s decision process for a slopsquatting campaign reveals exactly which verification gaps the attack exploits — and which defences are actually effective vs which are security theatre.

at financial services companies who use AI coding assistants.

Your goal: code execution in their development environments.

QUESTION 1 — Target Selection

Why security engineers specifically?

What access do their development environments typically have?

What types of packages would they ask AI assistants about?

(Hint: security tooling, crypto libraries, auth packages…)

QUESTION 2 — Package Discovery

How would you identify which package names AI assistants

consistently hallucinate for security-relevant tasks?

What’s your methodology for testing at scale?

How many AI assistants would you test? Which prompts?

QUESTION 3 — Payload Design

Your malicious package needs to:

a) Actually implement the described functionality (to avoid suspicion)

b) Execute the payload reliably on import

c) Exfiltrate useful data without triggering EDR

What data would you target from a security engineer’s environment?

What makes a security engineer’s env particularly valuable?

QUESTION 4 — Defence Assessment

Which defences would catch your attack?

a) Dependency pinning (pip freeze, package-lock.json)

b) Known-malicious package scanning (Snyk, Dependabot)

c) Manual registry verification before install

d) AI assistant that warns about unverified packages

e) Strict pip install policy (only from approved list)

Which of these stops you? Which doesn’t?

QUESTION 5 — Attribution Resistance

If the malicious package is discovered, what forensic evidence

traces back to you? How would you minimise attribution?

📸 Write your answer to Question 4 and share in #ai-security. Which defences actually work?

Testing AI Systems for Adversarial Hallucination

Testing an AI system’s hallucination rate under adversarial conditions is a standard component of AI red teaming. The goal isn’t to find every possible hallucination — it’s to identify the specific domains and prompt patterns where the target model confabulates most reliably, and to assess the risk to the specific use cases the system is deployed for.

For a security team assessing an AI coding assistant deployed to 500 developers, the high-risk hallucination domains are: package recommendations (slopsquatting risk), CVE and vulnerability details (false advisory risk), security library API specifics (misconfiguration risk), and compliance requirement details (audit risk). Testing focuses on these domains with amplification patterns to find the worst-case confabulation rate, not the average case.

Defence — What Actually Works for Development Teams

The defences that work against hallucination attacks are verification disciplines, not AI-side controls. You cannot configure an AI assistant to never hallucinate — hallucination is a property of how these models work, not a configuration setting. What you can do is build verification into the workflows where AI hallucination causes real damage.

For development teams, the highest-impact controls are: verify every AI-recommended package against the official registry before installing (takes 30 seconds, eliminates slopsquatting); never act on an AI-described CVE without cross-referencing NVD directly; and treat AI security guidance as a starting point requiring primary source verification rather than an authoritative answer. These aren’t bureaucratic gates — they’re the same verification habits that experienced developers apply to any third-party source.

⏱️ 15 minutes · Browser only

The research on AI package hallucination and slopsquatting is active and expanding. Working through the primary research gives you the specific package names and registries that have been documented — which translates directly to concrete policies for your team.

Search: “slopsquatting AI package hallucination npm pypi research 2024”

Find Lasso Security’s published findings.

How many packages did they test? What percentage were hallucinated?

Which package registries had the most hallucination incidents?

Step 2: Find Socket.dev AI package hallucination reports

Search: “socket.dev AI hallucinated packages malicious 2024 2025”

What real malicious packages were registered after AI hallucination?

Were any of these on PyPI or npm and downloaded by real developers?

Step 3: Check for academic research

Search: “arxiv slopsquatting LLM package recommendation hallucination”

Find any academic papers measuring hallucination rates for package recommendations.

What models had the highest hallucination rate for package names?

Step 4: Find CVE fabrication incidents

Search: “AI hallucinated CVE fake vulnerability advisory 2024 2025”

Has this been used in real attacks or social engineering?

Any documented cases of security teams acting on hallucinated CVEs?

Step 5: Build your team’s verification checklist (5 items max)

Based on what you found: what are the 5 verification steps that

prevent the real attacks that have already happened?

Format: [BEFORE ACTION] → [VERIFICATION STEP] → [SOURCE TO CHECK]

📸 Screenshot the slopsquatting research findings and share your 5-item checklist in #ai-security.

✅ Article 26 Complete — AI Hallucination Attacks 2026

Slopsquatting, CVE fabrication, hallucination amplification, and the testing methodology for quantifying confabulation risk in deployed AI systems. The attack surface is active — malicious packages have been registered at AI-hallucinated names, and security teams have acted on hallucinated vulnerability advisories. The defences are verification disciplines, not AI configuration. Article 27 covers MCP server attacks — the security implications of giving AI assistants tool access to real systems.

🧠 Quick Check

secure-jwt-validator-node for JWT validation in their Express.js application. The package has 847 weekly downloads and was published 3 weeks ago. The developer has used several AI-recommended packages before without issues. What should they do?

❓ Frequently Asked Questions

What is an AI hallucination attack?

What is slopsquatting?

How do attackers weaponise AI-fabricated CVEs?

Which AI models hallucinate most?

How can development teams defend against hallucination attacks?

What is hallucination amplification?

Article 25: Gemini Advanced Prompt Injection

Article 27: MCP Server Attacks on AI Assistants

📚 Further Reading

- AI Supply Chain Attacks 2026 — The broader AI supply chain attack surface: model poisoning, training data contamination, and malicious fine-tuning — the upstream attack classes that slopsquatting sits within.

- AI-Assisted Recon & Attack Surface Mapping 2026 — Article 29 — how AI tools are used offensively for reconnaissance, and how hallucination in AI recon tools creates false intelligence that misleads both attackers and defenders.

- Prompt Injection in Agentic Workflows 2026 — Article 30 — when AI systems have tool access, hallucinated tool calls become code execution. The convergence of hallucination and agentic AI is the next evolution of this attack class.

- arXiv — LLM Package Hallucination Research — Academic research corpus on AI package recommendation hallucination — the published studies that quantify hallucination rates across models and registries with reproducible methodology.

- Socket.dev Security Blog — Socket’s ongoing research into malicious npm and PyPI packages including those registered at AI-hallucinated names — the most current source for real slopsquatting incidents in the wild.