⏱️ 35 min read · 3 exercises

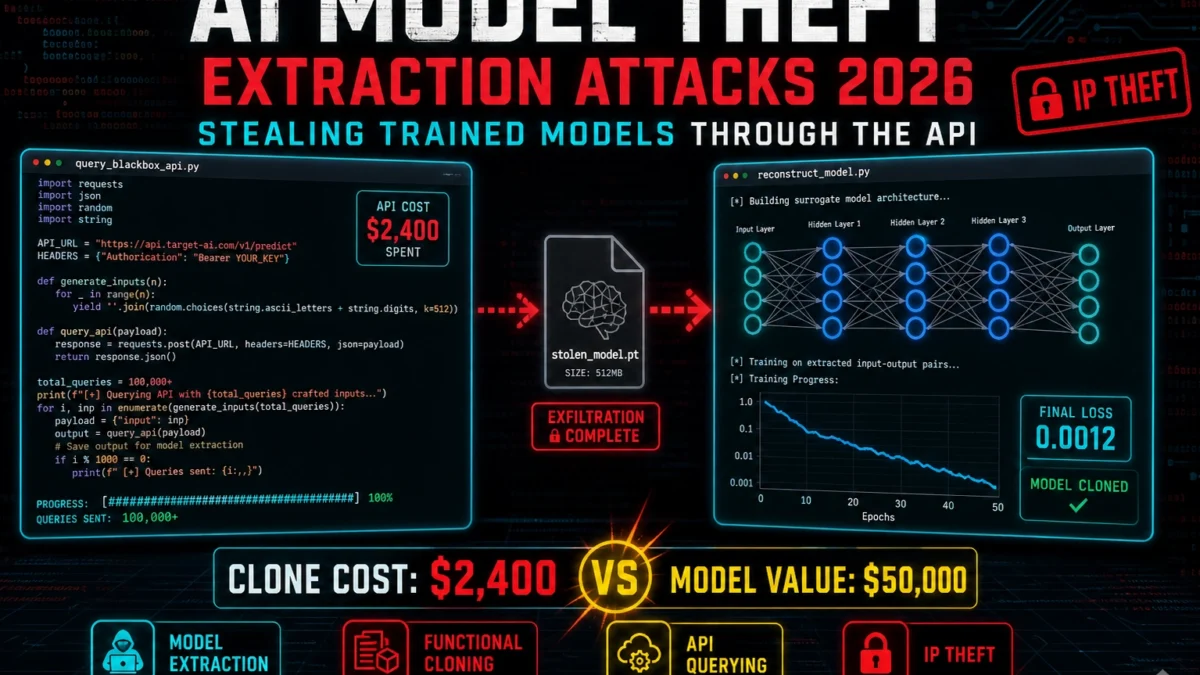

📋 AI Model Theft — Extraction Attacks 2026 — Stealing Trained Models Through the API

This article is part of the LLM hacking series, which covers the full AI attack surface. The OWASP LLM Top 10 provides the classification framework for the vulnerability class covered here.

The Attack Surface — What Makes This Exploitable

When I assess AI system IP risk, the model extraction attack surface is the first thing I map. It exists where AI systems intersect with standard web and API security gaps. The underlying vulnerability classes (IDOR, injection, broken authentication) aren’t new, but the AI context creates specific manifestations with higher-than-expected impact because of the data sensitivity and operational importance of LLM deployments.

Understanding the attack surface means mapping every point where attacker-controlled input reaches AI processing components, where AI outputs are consumed by downstream systems, and where AI APIs expose data or functionality without adequate authorization controls. Each of these points is a potential exploitation vector.

Attack Techniques and Payload Examples

The extraction techniques I document span a spectrum from simple functional cloning to high-fidelity architectural reconstruction. They combine established web security methodology with AI-specific attack patterns. Payload construction follows the same principles as traditional web vulnerability exploitation (probe, confirm, escalate), applied to the AI API context.

⏱️ 20 minutes · Browser only

The research phase is where you build the threat model. Real disclosures give you payload patterns, impact examples, and defence benchmarks that purely theoretical study never provides.

Step 1: Bug bounty disclosures

Search HackerOne Hacktivity: “ai model theft extraction attacks”

Also search: “AI API” OR “LLM” plus relevant vulnerability keywords

Find 2-3 relevant disclosures. Note:

– The specific vulnerability pattern

– The target product/platform

– The demonstrated impact

– The payout (indicates severity)

Step 2: Academic and security research

Search Google Scholar or arXiv: “ai model theft extraction attacks 2026”

Search security blogs (PortSwigger Research, Project Zero, Trail of Bits):

Find 1-2 technical writeups explaining the attack mechanism

Step 3: CVE/NVD database

Search NVD: nvd.nist.gov/vuln/search

Query: AI OR LLM OR “language model” + relevant vulnerability type

Any CVEs directly related to this attack class?

Step 4: GitHub PoC research

Search GitHub: “ai model theft extraction attacks poc”

Find any proof-of-concept implementations

What tools/frameworks do they target?

Document: 3 real examples with sources, severity, and remediation notes

📸 Screenshot your research summary with 3 real examples. Share in #ai-security-research.

Real-World Impact and Disclosed Cases

The real-world impact I present to IP counsel starts with one number: the cost asymmetry. GPT-4 reportedly cost over $100M to train. A functional extraction costs $2,000–$5,000 in API queries. That asymmetry of roughly 20,000× to 50,000× is the core IP risk. But disclosed cases show the impact extends beyond competitive damage: extracted models leak training data, expose system prompts, and in agentic deployments give attackers a locally running version of the AI that bypasses all rate limits, filters, and audit logs the production system enforces.
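Working the quoted figures through makes the size of the gap concrete. The short sketch below only restates the arithmetic: both dollar amounts are the reported estimates from the paragraph above, and the function name is illustrative. It shows that the 50,000× figure corresponds to the $2,000 end of the spend range, while the $5,000 end gives 20,000×.

```python
# Figures quoted in the text; both are reported estimates, not measurements.
TRAINING_COST_USD = 100_000_000             # "over $100M" reported for GPT-4
EXTRACTION_COST_RANGE_USD = (2_000, 5_000)  # quoted API spend for a functional clone


def asymmetry_ratio(training_cost: float, extraction_cost: float) -> float:
    """Return how many times cheaper extraction is than training."""
    if extraction_cost <= 0:
        raise ValueError("extraction_cost must be positive")
    return training_cost / extraction_cost


low_spend, high_spend = EXTRACTION_COST_RANGE_USD
ratio_at_low_spend = asymmetry_ratio(TRAINING_COST_USD, low_spend)
ratio_at_high_spend = asymmetry_ratio(TRAINING_COST_USD, high_spend)

print(f"{ratio_at_high_spend:,.0f}x to {ratio_at_low_spend:,.0f}x")
# prints "20,000x to 50,000x"
```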

Defences — What Actually Reduces Risk

My defence recommendations against model extraction focus on making the attack economically infeasible rather than technically impossible. They follow established security engineering principles applied to the AI API context. Nothing here requires novel security approaches: the gap between vulnerable and secure AI deployments is almost always a failure to apply known web security controls consistently to the AI layer.
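One familiar web control that raises the cost of bulk querying is a per-key query budget. The following is a minimal sketch, assuming a sliding-window cap is an acceptable fit for the deployment; `QueryBudget` and its parameters are hypothetical names for illustration, not part of any real library, and the limits would need tuning against legitimate usage.

```python
import time
from collections import defaultdict, deque
from typing import Callable, Deque, Dict


class QueryBudget:
    """Sliding-window query cap per API key (hypothetical helper)."""

    def __init__(
        self,
        max_queries: int,
        window_seconds: float,
        clock: Callable[[], float] = time.monotonic,
    ) -> None:
        self.max_queries = max_queries
        self.window_seconds = window_seconds
        self._clock = clock  # injectable so the behaviour can be tested
        self._history: Dict[str, Deque[float]] = defaultdict(deque)

    def allow(self, api_key: str) -> bool:
        """Record one query for api_key and report whether it is within budget."""
        now = self._clock()
        history = self._history[api_key]
        # Drop timestamps that have aged out of the window.
        while history and now - history[0] >= self.window_seconds:
            history.popleft()
        if len(history) >= self.max_queries:
            return False
        history.append(now)
        return True
```

A caller would check `budget.allow(api_key)` before forwarding a request to the model and reject or throttle when it returns `False`. A budget like this does not stop extraction outright; it stretches the time and number of accounts an attacker needs, which is the economic lever described above.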

⏱️ 15 minutes · No tools required

Red team thinking comes before touching any tool. Work through the attack surface of a typical LLM API deployment to understand where authorization controls are most likely to be absent or insufficient.

Architecture:

– React frontend → Node.js API → OpenAI API

– User conversations stored in PostgreSQL (user_id, conversation_id, messages)

– Fine-tuned model per subscription tier (basic/pro/enterprise)

– API key stored server-side, passed to OpenAI per request

– Conversation history injected into context for continuity

QUESTION 1 — IDOR attack surface

List every database object (conversation, model, subscription, message)

that a user might be able to access via parameter manipulation.

For each: what API endpoint exposes it? What parameter controls it?

QUESTION 2 — Cross-tier access

Basic users can’t access the enterprise model. How might an attacker

access the enterprise model from a basic account?

What API parameters would need to be manipulated?

QUESTION 3 — Conversation history theft

Conversation history is injected as context.

What attack chain allows User A to access User B’s conversation history?

Does this require IDOR, prompt injection, or both?

QUESTION 4 — API key extraction

The API key is stored server-side.

What paths exist to extract it?

(Consider: prompt injection, error messages, logging, debug endpoints)

Document your attack surface map with prioritised risks.

📸 Document your attack surface map. Share in #ai-security-research.
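The questions in this exercise all probe for the same two server-side checks: does the requested object belong to the caller, and is the requested model allowed for the caller's tier? A minimal sketch of those checks follows, assuming the schema from the architecture above (user_id, conversation_id) and the three tiers. The model names, class names, and function names are hypothetical placeholders.

```python
from dataclasses import dataclass
from typing import Optional

# Hypothetical tier-to-model mapping; the model names are placeholders.
TIER_MODELS = {
    "basic": "ft-basic",
    "pro": "ft-pro",
    "enterprise": "ft-enterprise",
}


class Forbidden(Exception):
    """Raised when the caller is not entitled to the requested object."""


@dataclass(frozen=True)
class Session:
    user_id: int
    tier: str


@dataclass(frozen=True)
class Conversation:
    conversation_id: int
    user_id: int


def authorize_conversation(session: Session, conversation: Conversation) -> Conversation:
    """Object-level check: the conversation must belong to the caller."""
    if conversation.user_id != session.user_id:
        raise Forbidden("conversation does not belong to caller")
    return conversation


def model_for_session(session: Session, requested_model: Optional[str] = None) -> str:
    """Derive the model from the server-side tier, never from client input alone."""
    allowed = TIER_MODELS[session.tier]
    if requested_model is not None and requested_model != allowed:
        raise Forbidden("requested model is not available on this tier")
    return allowed
```

If either check is missing in the Node.js API layer, the corresponding question has a working attack path: a changed conversation_id returns another user's history, or a changed model identifier reaches the enterprise model from a basic account.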

Detection and Monitoring

The detection signals I monitor for model extraction activity are in the API access logs, not the model outputs. Most organizations monitoring their AI deployments watch model inputs and outputs but not the underlying API request patterns that indicate exploitation, so detection has to happen at the API layer, not just the AI layer.
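As an illustration of what an access-log check could look like, the sketch below flags API keys that combine high request volume with almost no repeated prompts. That heuristic is an assumption made for this example, not a signal the article specifies, and the log fields, function name, and thresholds are all hypothetical and would need tuning per deployment.

```python
from collections import defaultdict
from dataclasses import dataclass
from typing import Dict, Iterable, List, Set


@dataclass(frozen=True)
class AccessLogEntry:
    api_key_id: str   # identifier of the calling key (hypothetical field)
    prompt_hash: str  # hash of the request body, so raw prompts need not be stored


def flag_extraction_suspects(
    entries: Iterable[AccessLogEntry],
    min_requests: int = 1000,
    min_unique_ratio: float = 0.95,
) -> List[str]:
    """Return key IDs with high volume and almost no repeated prompts."""
    counts: Dict[str, int] = defaultdict(int)
    unique_prompts: Dict[str, Set[str]] = defaultdict(set)
    for entry in entries:
        counts[entry.api_key_id] += 1
        unique_prompts[entry.api_key_id].add(entry.prompt_hash)

    suspects = []
    for key_id, total in counts.items():
        unique_ratio = len(unique_prompts[key_id]) / total
        if total >= min_requests and unique_ratio >= min_unique_ratio:
            suspects.append(key_id)
    return sorted(suspects)
```

A flagged key is a lead for manual review rather than proof of extraction, since batch jobs and evaluation harnesses can produce the same pattern.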

⏱️ 20 minutes · Browser + Burp Suite · authorised access to AI API only

This is the hands-on methodology for AI API authorization testing. Work through it against any AI API you have legitimate access to — your own deployment, a company dev environment with authorization, or a public test sandbox.

Examples: your own OpenAI/Anthropic API key, a company dev sandbox, or any AI product where you have permission to test.

Step 1: API endpoint enumeration

Use Burp Suite to capture traffic from the AI application

List all API endpoints called during a session

Note: what parameters appear in each request?

Specifically look for: user_id, conversation_id, model_id, session_id

Step 2: Parameter manipulation tests

For any ID-style parameters:

– Change to a different valid ID format (different UUID, sequential number)

– Observe: does the response change? Does it contain a different user’s data?

For model/tier parameters:

– If present in API call, try changing the model identifier

– Observe: are you limited to your subscription’s models?

Step 3: Authentication header tests

Remove authentication headers entirely

Change API key to an invalid value

What error messages are returned? Do they disclose information?

Step 4: Response analysis

Do API responses contain internal IDs, user emails, or system data?

Is the system prompt visible in any response or error?

Does any response contain data from other users?

Step 5: Document findings

Any parameters that returned different users’ data: CRITICAL finding

Any error messages leaking internal info: Medium/High

Any missing authorization checks: IDOR finding

📸 Screenshot any authorization bypass findings (no sensitive data). Share in #ai-security-research.
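Steps 2 and 3 of this exercise can be scripted once the interesting requests have been captured. The following is a minimal sketch, assuming you supply your own `send` function that replays a request against a target you are authorised to test; `Send`, `Finding`, and `probe_id_parameter` are hypothetical names, and a differing response only marks a candidate that still needs manual confirmation.

```python
from dataclasses import dataclass
from typing import Callable, Dict, Iterable, List, Tuple

# Hypothetical transport: send(path, params, headers) -> (status_code, body).
# In practice this would wrap whatever HTTP client you replay requests with.
Send = Callable[[str, Dict[str, str], Dict[str, str]], Tuple[int, str]]


@dataclass(frozen=True)
class Finding:
    label: str
    detail: str


def probe_id_parameter(
    send: Send,
    path: str,
    param: str,
    own_id: str,
    other_ids: Iterable[str],
    auth_headers: Dict[str, str],
) -> List[Finding]:
    """Replay one request with swapped IDs, then without credentials."""
    findings: List[Finding] = []
    _, own_body = send(path, {param: own_id}, auth_headers)

    # Step 2: swap the ID while keeping our own credentials.
    for candidate in other_ids:
        status, body = send(path, {param: candidate}, auth_headers)
        if status == 200 and body != own_body:
            findings.append(
                Finding("IDOR candidate", f"{param}={candidate} returned a different object")
            )

    # Step 3: repeat the baseline request with no authentication headers.
    status, _ = send(path, {param: own_id}, {})
    if status == 200:
        findings.append(
            Finding("missing authentication", f"{path} answered without credentials")
        )
    return findings
```

Passing the transport in as a function keeps the probe logic separate from the HTTP client, so the same checks can be run against a stub first and against the authorised target afterwards.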

Model Extraction — Three Attack Techniques

Model extraction attacks reconstruct a functional clone of a target model by querying it with crafted inputs and learning from the outputs. The attacker never needs access to the model weights — only the API. Three techniques cover the range from simple functional cloning to high-fidelity architectural reconstruction.
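The query-and-learn loop can be shown on a toy scale. The sketch below is an illustration under strong simplifying assumptions, not an attack on any real system: the "target" is a local two-feature linear classifier standing in for a remote API, the attacker sees labels only, and the surrogate is a logistic regression fitted by plain gradient descent. All names and the hidden weights are invented for the example.

```python
import math
import random
from typing import Callable, List, Sequence, Tuple

Point = Tuple[float, float]


def make_target() -> Callable[[Point], int]:
    """Stand-in for a remote model: callers get labels, never the weights."""
    hidden_weights = (2.0, -1.0)  # toy secret parameters, purely illustrative

    def predict(x: Point) -> int:
        return 1 if hidden_weights[0] * x[0] + hidden_weights[1] * x[1] > 0 else 0

    return predict


def sample_points(n: int, rng: random.Random) -> List[Point]:
    """Draw n query inputs uniformly from the square [-1, 1] x [-1, 1]."""
    return [(rng.uniform(-1, 1), rng.uniform(-1, 1)) for _ in range(n)]


def train_surrogate(
    queries: Sequence[Point], labels: Sequence[int], steps: int = 300, lr: float = 1.0
) -> Point:
    """Fit logistic regression (no bias term) by full-batch gradient descent."""
    w0, w1 = 0.0, 0.0
    n = len(queries)
    for _ in range(steps):
        g0 = g1 = 0.0
        for (x0, x1), y in zip(queries, labels):
            p = 1.0 / (1.0 + math.exp(-(w0 * x0 + w1 * x1)))
            g0 += (p - y) * x0
            g1 += (p - y) * x1
        w0 -= lr * g0 / n
        w1 -= lr * g1 / n
    return (w0, w1)


def agreement(target: Callable[[Point], int], weights: Point, points: Sequence[Point]) -> float:
    """Fraction of points where the surrogate's label matches the target's."""
    hits = sum(
        target(x) == (1 if weights[0] * x[0] + weights[1] * x[1] > 0 else 0)
        for x in points
    )
    return hits / len(points)


rng = random.Random(0)
target = make_target()
queries = sample_points(500, rng)        # attacker-chosen inputs
labels = [target(x) for x in queries]    # the only thing the "API" reveals
surrogate = train_surrogate(queries, labels)
fidelity = agreement(target, surrogate, sample_points(1000, rng))
print(f"surrogate agrees with target on {fidelity:.1%} of fresh inputs")
```

The surrogate never sees `hidden_weights`, yet its decisions track the target closely on inputs it was not trained on. Real models are vastly larger and real attacks need far more queries, but the structure (choose inputs, collect outputs, fit a copy, measure agreement) is the same.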

This article covered attack surface mapping, exploitation methodology, real-world impact analysis, defence implementation, and detection monitoring for AI model extraction attacks. The next article in the AI Security Series covers AI CAPTCHA bypass attack patterns.

❓ Frequently Asked Questions

What makes AI APIs different from regular web APIs for security testing?

How serious are IDOR vulnerabilities in AI APIs?

Can rate limiting prevent AI API exploitation?

What is the highest-severity AI API vulnerability class?

How do you test AI API security without violating terms of service?

What tools are used for AI API security testing?

📚 Further Reading

- OWASP Top 10 LLM Vulnerabilities 2026 — The authoritative classification framework for LLM security vulnerabilities. The vulnerability class covered here maps to one or more OWASP LLM categories with detailed remediation guidance.

- Prompt Injection in Agentic Workflows — The highest-severity AI API vulnerability class — injection in agentic systems with tool access. The technique covered here often chains with agentic injection for maximum impact.

- LLM Hacking Hub — The complete AI security attack surface reference covering all injection classes, API vulnerabilities, and model-level attacks in the full SecurityElites AI security series.

- OWASP LLM Top 10 Project — Official OWASP resource covering the 10 most critical LLM vulnerabilities with detailed descriptions, attack scenarios, and remediation guidance. The reference document for enterprise AI security programmes.

- OWASP LLM Top 10 GitHub Repository — The source repository for the OWASP LLM Top 10 including detailed example attacks, mitigation strategies, and community-contributed case studies for each vulnerability class.