PROMPTFLUX — AI Malware Guide 2026

PROMPTFLUX represents the offensive convergence of the LLM capabilities I covered in “What Is an LLM?” with the adversarial ML techniques from “Adversarial Machine Learning 2026”. For the full AI malware picture, including how AI is used to write malware, see “Can AI Write Malware?”

What PROMPTFLUX and PROMPTSTEAL Are

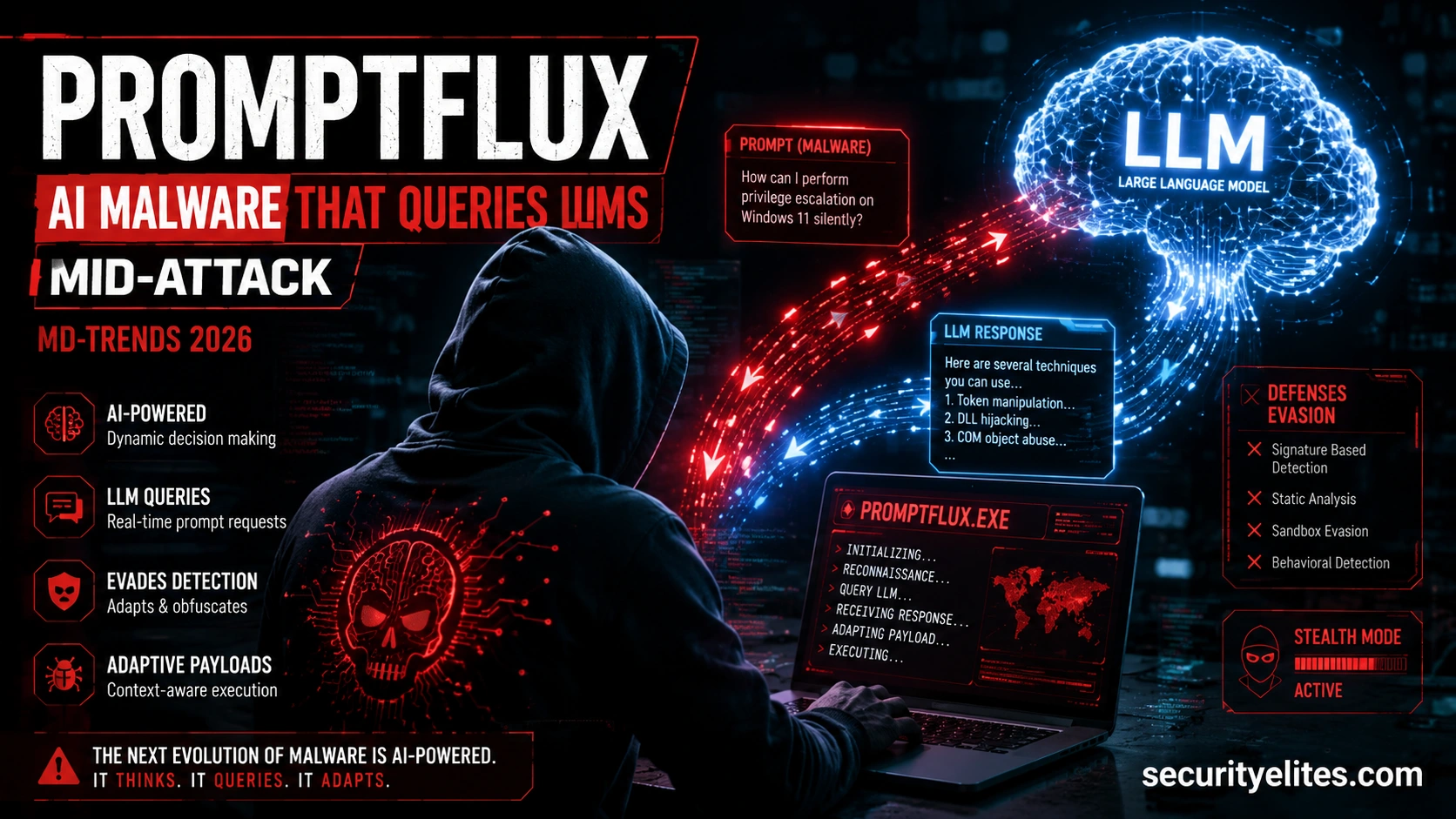

The key distinction I want to establish immediately: PROMPTFLUX is not malware written by AI. It is malware that uses AI during its execution. That’s a fundamentally different threat category. Traditional AI-generated malware (what IBM calls “Slopoly”) uses AI at the development stage — a human uses an LLM to help write malicious code, then deploys it. PROMPTFLUX and PROMPTSTEAL query LLMs during the attack itself, in real time, to make dynamic decisions about how to proceed.

How LLM-Querying Malware Works

Here is my model of how LLM-querying malware evades detection by using AI during execution. The key insight is that the malware’s attack behaviour is not fixed at compile time; it is generated at runtime by an external AI. The malware makes API calls to an LLM and uses each response to decide what to do next, so every execution in a different environment can produce a different behaviour profile. That variability is precisely what defeats the detection approaches defenders currently rely on. This is adversarial use of the same flexibility that makes LLMs useful for legitimate software.

Why Signature Detection Fails

The adversarial ML and evasion concepts I covered in earlier guides (how AI classifiers can be fooled by carefully crafted inputs) come together in PROMPTFLUX in a way that makes its evasion more robust than previous techniques. Traditional malware evasion relies on obfuscation: the code does the same thing but looks different. LLM-querying malware evasion relies on adaptation: the code actually does something different depending on the environment, and the AI decides what that different thing should be. Signature detection depends on a stable pattern in the code or its behaviour; when the behaviour itself is chosen at runtime, there is no stable pattern to write a signature against.
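To make the obfuscation half of that contrast concrete, here is a minimal sketch that uses an exact SHA-256 hash as the “signature”. This is a simplifying assumption for illustration: real AV engines use byte patterns, YARA-style rules and heuristics that tolerate some variation, but the principle is the same. The two script fragments are hypothetical and benign; they behave identically and differ only in naming, the kind of surface change an LLM rewrite can introduce on every run.

```python
import hashlib

# Hypothetical, benign fragments with identical behaviour. The second only
# renames a variable and adds a comment: a purely cosmetic rewrite.
variant_a = b'msg = "hello"\nprint(msg)\n'
variant_b = b'# greet the user\ngreeting = "hello"\nprint(greeting)\n'


def sha256_signature(code: bytes) -> str:
    """Exact-match 'signature': the hex SHA-256 digest of the code bytes."""
    return hashlib.sha256(code).hexdigest()


# Signature database built from the only variant the defender has seen.
known_bad = {sha256_signature(variant_a)}


def signature_match(code: bytes) -> bool:
    return sha256_signature(code) in known_bad


print(signature_match(variant_a))  # True
print(signature_match(variant_b))  # False: same behaviour, different hash
```

Adaptation goes a step further: when the AI also changes what the code does, there is no earlier variant whose signature could carry over, which is why the detection exercise that follows focuses on behaviour rather than content.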

Design a detection strategy using what you now know about how it works.

1. THE LLM API CALL SIGNAL

PROMPTFLUX must make API calls to an LLM to function.

What network traffic does this generate?

How do you distinguish legitimate LLM API usage from malicious?

(Hint: what process on the machine is making the call? At what time? With what data?)

2. THE DATA EXFILTRATION SIGNAL

The malware sends environment data to the LLM (AV product name, OS version, network config).

What does this traffic look like?

Can you detect environment data being sent to an AI API?

3. THE RESPONSE IMPLEMENTATION SIGNAL

After getting the AI response, the malware implements the recommended evasion.

What happens immediately after an LLM API call?

Does the sequence “LLM call → new behaviour” constitute a detectable pattern?

4. YOUR DETECTION RULE

Write a plain-English EDR detection rule that would flag this behaviour.

Format: “Alert when [process] makes [API call] containing [data pattern] followed by [action]”
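One possible answer to step 4, sketched in Python under heavy assumptions: the event schema (`Event`), host list, approved-process list, reconnaissance keywords, action names and 60-second window are all hypothetical placeholders, not fields from any particular EDR product. The rule mirrors the template: an unapproved process calls an LLM API, the request body (if TLS inspection makes it visible) contains environment reconnaissance terms, and the same process performs a follow-on action shortly afterwards.

```python
from dataclasses import dataclass
from typing import Iterable, List, Tuple

# Hypothetical values throughout: tune every set below for your environment.
LLM_API_HOSTS = {
    "api.openai.com",
    "api.anthropic.com",
    "generativelanguage.googleapis.com",
}
APPROVED_PROCESSES = {"chrome.exe", "code.exe"}   # approved LLM clients
RECON_KEYWORDS = ("antivirus", "defender", "os version", "ipconfig")
FOLLOW_ON_ACTIONS = {"file_write_script", "process_spawn", "registry_run_key"}
WINDOW_S = 60.0                                    # correlation window


@dataclass
class Event:
    ts: float          # event time in seconds
    process: str       # image name of the acting process
    kind: str          # "net" for an outbound request, else an action type
    host: str = ""     # destination host (net events only)
    body: str = ""     # request body, only populated if TLS is inspected


def is_suspicious_llm_call(e: Event) -> bool:
    """[process] makes [API call] containing [data pattern]."""
    if e.kind != "net" or e.host.lower() not in LLM_API_HOSTS:
        return False
    if e.process.lower() in APPROVED_PROCESSES:
        return False
    if not e.body:
        # No decrypted body available: fall back to process + destination.
        return True
    body = e.body.lower()
    return any(k in body for k in RECON_KEYWORDS)


def detect(events: Iterable[Event]) -> List[Tuple[Event, Event]]:
    """...followed by [action] from the same process within WINDOW_S."""
    alerts: List[Tuple[Event, Event]] = []
    pending: List[Event] = []   # suspicious calls still inside the window
    for e in sorted(events, key=lambda ev: ev.ts):
        # Drop calls whose correlation window has expired.
        pending = [c for c in pending if e.ts - c.ts <= WINDOW_S]
        if is_suspicious_llm_call(e):
            pending.append(e)
        elif e.kind in FOLLOW_ON_ACTIONS:
            alerts.extend((c, e) for c in pending if c.process == e.process)
    return alerts


# Hypothetical event stream for illustration.
events = [
    Event(100.0, "wscript.exe", "net", "generativelanguage.googleapis.com",
          "Installed antivirus: Defender; OS version: 10.0"),
    Event(130.0, "wscript.exe", "file_write_script"),
    Event(140.0, "code.exe", "net", "api.openai.com"),
    Event(150.0, "code.exe", "process_spawn"),
]

for call, action in detect(events):
    print(f"ALERT {call.process}: {call.host} -> {action.kind} "
          f"after {action.ts - call.ts:.0f}s")
```

Running it prints a single alert for `wscript.exe` (30 seconds between the call and the script write); the `code.exe` traffic is ignored because that process is on the approved list. Correlating on process identity and a short time window is what turns two individually weak signals into one stronger one; a production rule would key on a process ID or GUID rather than an image name.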

Slopoly — The Broader AI Malware Ecosystem

IBM’s X-Force team identified and named “Slopoly”, their internal term for malware produced with generative AI tools, as a separate but related category. My framing: PROMPTFLUX is the sophisticated end of the AI malware spectrum (malware that uses AI at runtime), while Slopoly is the commoditised end (malware written by AI and deployed without it). Both are increasing in volume, and both compress the time from attack concept to deployment.

Defensive Adaptations for AI Malware

LLM API Endpoints to Monitor

My practical starting point for any organisation building PROMPTFLUX detection: add the primary LLM API endpoints to your egress monitoring ruleset. Any process making requests to these endpoints that isn’t on your approved application list is a detection signal.
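A minimal sketch of that egress check, assuming your proxy or firewall can export records that include the originating process name. The CSV schema (`timestamp,process,dest_host`), the approved list and the sample log are hypothetical. The hostnames are real public API endpoints for OpenAI, Anthropic, Google Gemini and Hugging Face inference, offered as an illustrative starting set rather than a complete list.

```python
import csv
import io
from typing import Dict, List

# Illustrative LLM API hostnames (not exhaustive; adjust for your providers).
LLM_API_DOMAINS = (
    "api.openai.com",
    "api.anthropic.com",
    "generativelanguage.googleapis.com",
    "api-inference.huggingface.co",
)

# Hypothetical approved application list.
APPROVED = {"chrome.exe", "msedge.exe", "code.exe"}


def is_llm_endpoint(host: str) -> bool:
    """Match the host exactly or as a subdomain of a listed endpoint."""
    host = host.lower().rstrip(".")
    return any(host == d or host.endswith("." + d) for d in LLM_API_DOMAINS)


def flag_unapproved(log_text: str) -> List[Dict[str, str]]:
    """Return egress records where an unapproved process contacts an LLM API.

    Expects CSV with columns timestamp,process,dest_host (hypothetical schema).
    """
    reader = csv.DictReader(io.StringIO(log_text))
    return [
        row for row in reader
        if is_llm_endpoint(row["dest_host"])
        and row["process"].lower() not in APPROVED
    ]


# Hypothetical sample log.
sample_log = """timestamp,process,dest_host
2026-03-01T10:00:00Z,chrome.exe,api.openai.com
2026-03-01T10:00:05Z,powershell.exe,generativelanguage.googleapis.com
2026-03-01T10:00:09Z,outlook.exe,example.com
"""

for row in flag_unapproved(sample_log):
    print(row["timestamp"], row["process"], row["dest_host"])
```

Only the `powershell.exe` record is flagged in the sample. Subdomain matching is deliberately strict (exact host, or a suffix of `"." + domain`), so a lookalike such as `notapi.openai.com` is not treated as an LLM endpoint.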

PROMPTFLUX — Add to Your Detection Stack

One immediate action: add the major LLM API endpoints to your egress monitoring rules today. That single change starts building detection capability for this category. The “Can AI Write Malware?” guide covers the Slopoly end of the spectrum in full.

Further Reading

- Can AI Write Malware? 2026 — The full spectrum of AI-assisted malware including Slopoly, how AI-generated variants evade AV, and why behaviour-based detection remains the most effective defence.

- Adversarial Machine Learning 2026 — The detection evasion techniques that PROMPTFLUX applies to defender AI systems — how adversarial inputs cause security classifiers to misclassify malicious content.

- Agentic AI Security 2026 — The broader context of autonomous AI in attacks. PROMPTFLUX is one component of the agentic AI attack landscape documented in March-April 2026.

- Google Mandiant — M-Trends 2026 — The primary source naming PROMPTFLUX and PROMPTSTEAL. The full report covers AI-enabled attack acceleration across all phases of the attack lifecycle, with data from 500,000+ hours of frontline investigations.

- SecurityWeek — Cyber Insights 2026: Malware in the Age of AI — Expert analysis of the Slopoly malware economics and the broader AI-enabled malware landscape, including the cost-of-attack inversion cited above.