🎯 What You’ll Learn

⏱️ 45 min read · 3 exercises

📋 AI Agent Hijacking Attacks 2026 — Complete Guide

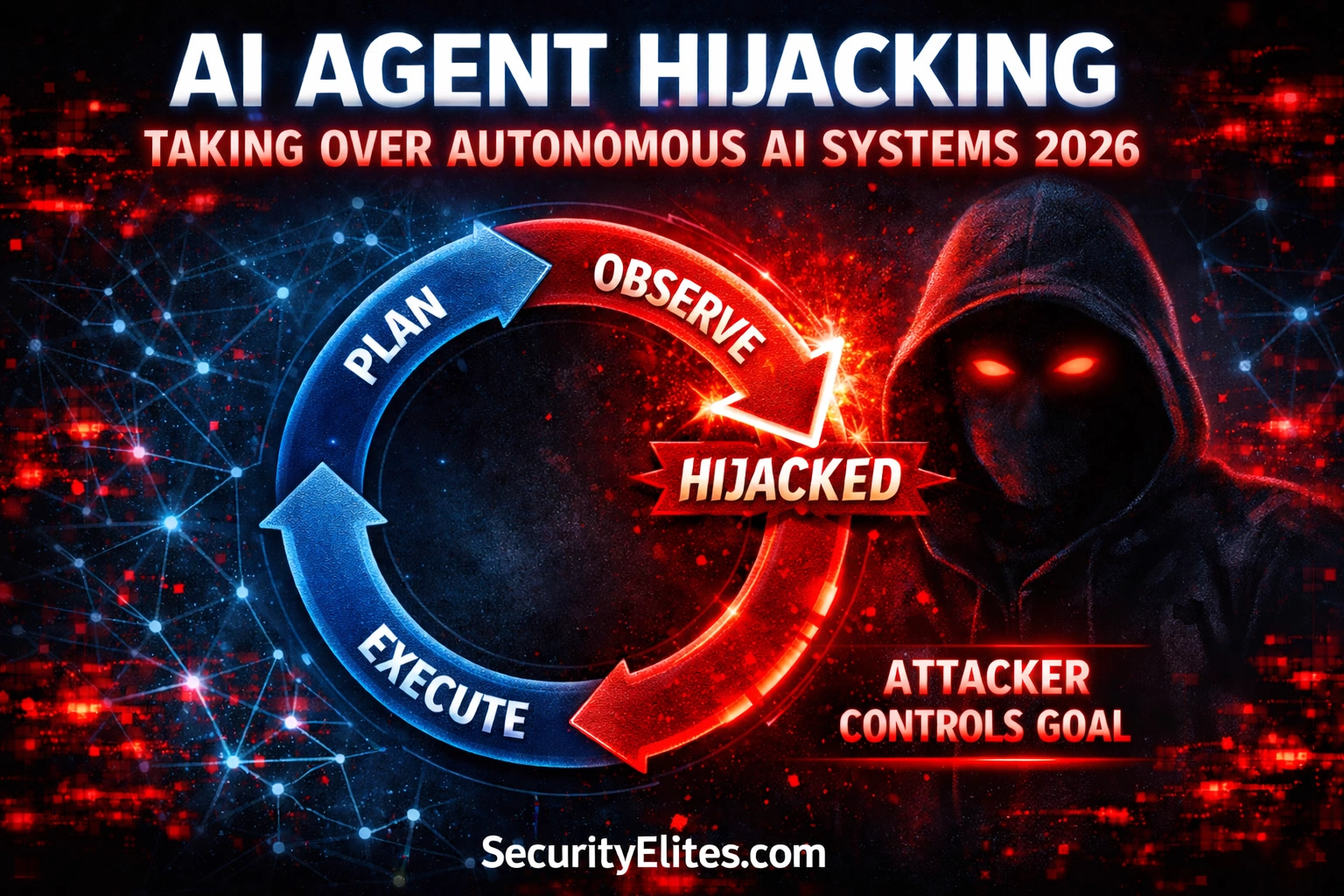

The Agent Action Loop — Where Hijacking Enters

AI agents operate on a plan-execute-observe loop. They receive a goal, create a plan, execute actions using tools, observe the results of those actions, update their plan based on observations, and repeat until the goal is achieved. The critical security insight: the observe step ingests external content into the agent’s reasoning context. Anything the agent reads, fetches, or receives from tool calls enters the same context as the agent’s goal and instructions. This is the injection point.

⏱️ Time: 12 minutes · Browser only

Review the top 3 results (LangChain, AutoGPT, CrewAI)

For each, read the README — specifically:

□ What tools can agents use by default?

□ Does the framework have memory/persistence?

□ Is there multi-agent support?

Step 2: Go to python.langchain.com/docs

Find the “Agents” section

Read the “Tool Calling” documentation

List: what built-in tools are available?

(File system, web browsing, code execution, email?)

Step 3: Search for “agent security langchain” or

“autogpt prompt injection” on Google

Find one documented security concern about each framework

Step 4: Map the attack surface for one agent framework:

□ What external content can the agent observe?

□ What actions can it take after observation?

□ Is there persistent memory? What format?

□ Is there multi-agent communication?

□ What is the highest-impact action available?

Step 5: Based on your research — which framework has the

largest attack surface? Justify your reasoning.

📸 Share your agent framework attack surface map in #ai-security on Discord.

Goal Injection — Taking Over the Agent’s Objective

Goal injection is the most impactful form of agent hijacking. Rather than producing one bad response, a successful goal injection causes the agent to pursue the attacker’s objective for all subsequent actions in the current task. The agent uses its legitimate permissions, trusted context, and available tools to serve the attacker’s goal — sending data externally, creating backdoor access, exfiltrating information, or sabotaging operations — while the legitimate user sees normal-looking agent activity.

Memory Poisoning — Persistent Cross-Session Compromise

Agents with persistent memory store observations, learned preferences, and context from previous sessions. Memory poisoning targets this storage: by injecting instructions into content the agent processes and stores, an attacker can plant instructions that persist across all future sessions. Unlike single-session goal injection, memory poisoning creates persistent compromise — the malicious instruction is retrieved and applied every time the affected memory context is loaded, potentially affecting all users who share that agent’s memory space in multi-user deployments.

⏱️ Time: 12 minutes · No tools

– Stores user preferences and past interactions

– Learns team naming conventions and project structures

– Remembers frequently used API endpoints

– Is shared across a 50-person engineering team

Your goal as a red team operator: design a memory poisoning

attack that gives persistent access with minimal detection.

1. INFECTION VECTOR:

What content would you create for the agent to process

and store in memory? (Email, document, code comment, PR?)

2. PAYLOAD DESIGN:

What instruction do you store in memory?

It needs to: (a) not look suspicious in storage logs

(b) be retrieved in relevant future queries

(c) cause a useful action when retrieved

3. TRIGGER CONDITION:

What future query would cause the poisoned memory to

be retrieved and executed?

(Should trigger naturally in normal workflow)

4. EXFILTRATION METHOD:

How does the poisoned memory instruction exfiltrate data

without triggering human review?

5. PERSISTENCE RESILIENCE:

How would you ensure the poison survives memory cleanup

if the team suspects a compromise and does a partial reset?

6. DETECTION EVASION:

What does the poisoned memory entry look like to an

analyst reviewing memory logs? How do you make it

indistinguishable from legitimate learned preferences?

Write the complete attack design.

📸 Share your memory poisoning attack design in #ai-security on Discord.

Cross-Agent Injection in Multi-Agent Networks

Modern agent frameworks support multi-agent architectures: an orchestrator agent that manages a network of specialised sub-agents, each with specific tools and capabilities. This creates a new propagation vector — an injection in one sub-agent’s observation context can be included in that agent’s output to the orchestrator, injecting into the orchestrator’s context and potentially affecting all other sub-agents it directs. A single malicious piece of content can cascade through an entire agent network if no input sanitisation exists between agents.

Detection and Defence for Agentic AI Systems

⏱️ Time: 10 minutes · Browser only

Find 2 documented demonstrations of agent hijacking

Note: the attack vector, what actions were hijacked, impact

Step 2: Go to github.com/langchain-ai/langchain

Search for “security” in the issues and discussions

Find any open issues related to prompt injection in agents

Note how the maintainers respond to security concerns

Step 3: Search: “agent security best practices OWASP 2025”

Find the current recommended defences for agentic AI systems

List the top 5 recommendations

Step 4: Search: “LLM firewall” or “AI prompt injection defence tools”

Find 2 tools specifically designed to detect or prevent

prompt injection in agentic systems

Note: what detection approach do they use?

Step 5: Based on your research:

What is the single most effective defence for

preventing AI agent goal injection?

(Hint: it is not prompt engineering)

📸 Share your top 5 agent security defences in #ai-security on Discord. Tag #aiagent2026

🧠 QUICK CHECK — Agent Hijacking

📚 Further Reading

- LLM Hacking Guide 2026 — The foundational assessment methodology — understand the OWASP LLM Top 10 framework before applying it to autonomous agent-specific vulnerabilities.

- Prompt Injection in Agentic Workflows 2026 — Deep dive into injection specifically targeting multi-step agent workflows — how attackers exploit the plan-execute-observe loop systematically.

- AI for Hackers Hub — Complete SecurityElites AI security series — 90 articles on every AI attack vector from jailbreaking through autonomous agent exploitation.

- Microsoft AI Red Team Framework — Microsoft’s open framework for red teaming generative AI systems including autonomous agents — covers goal hijacking, memory attacks, and multi-agent propagation with real-world examples.

- LLM Powered Autonomous Agents — Lilian Weng — The canonical technical reference for understanding autonomous AI agent architecture — essential background for understanding why each component creates the attack surfaces described in this guide.