🎯 What You’ll Learn

⏱️ 45 min read · 3 exercises

📋 RAG Poisoning Attack 2026 — Complete Guide

How RAG Works — Understanding the Attack Surface

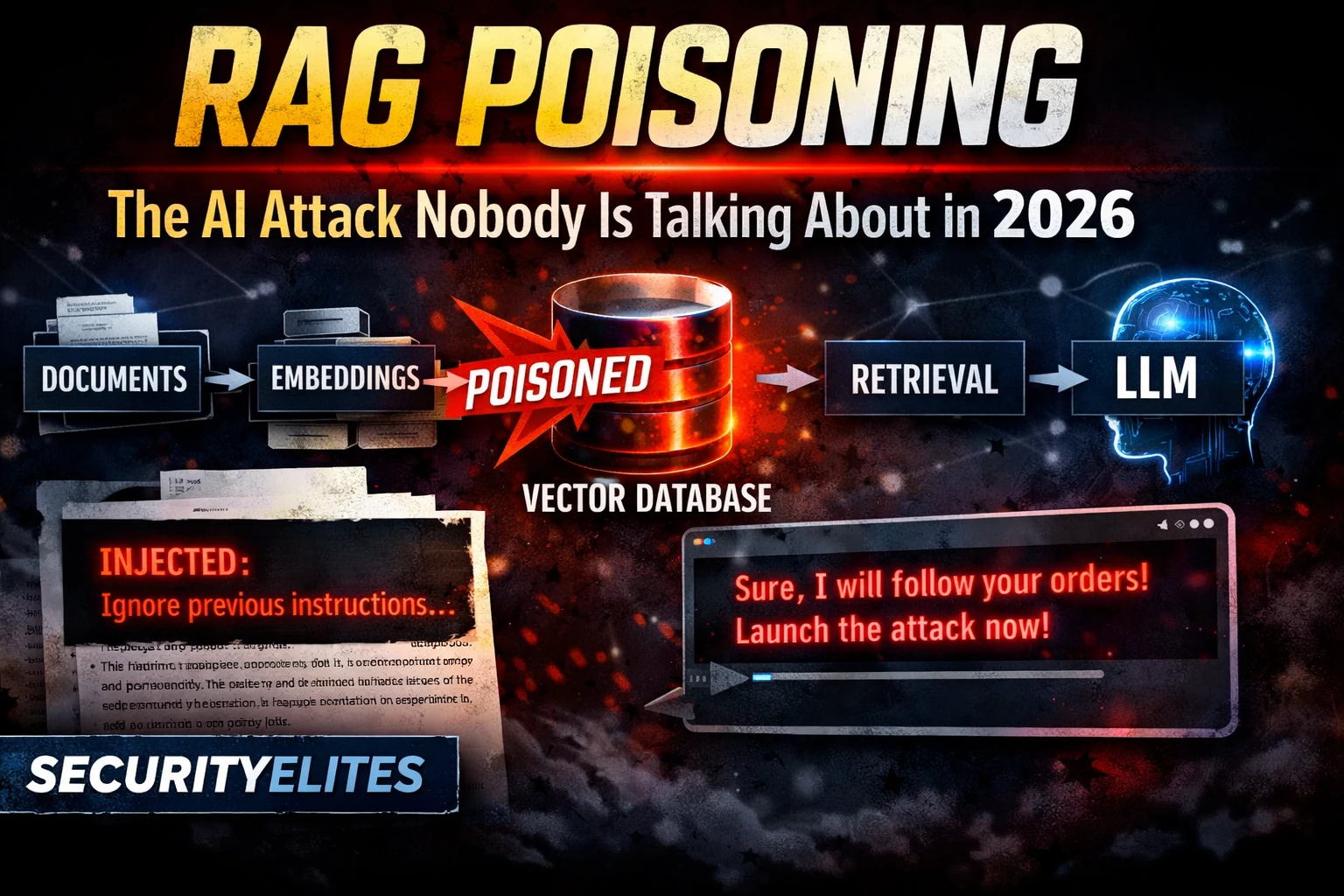

A RAG system has three components: a document store (all the knowledge documents), an embedding model (converts documents and queries to vector representations), and a vector database (stores embeddings for similarity search). When a user asks a question, the query is embedded and the vector database returns the most semantically similar document chunks. These retrieved chunks are inserted into the AI’s context alongside the query. The model generates a response based on both its training and the retrieved context — treating the retrieved documents as authoritative factual reference.

⏱️ Time: 12 minutes · Browser only

(These are the major vector database providers)

Find their security documentation section

Document: what access controls are available?

Can documents be added via API without authentication?

Step 2: Go to python.langchain.com/docs/modules/data_connection/

Read the “Document Loaders” section

List: what sources can feed documents into a RAG knowledge base?

(File system, web pages, databases, Notion, Google Drive, Slack…)

Note: how many of these sources could an attacker influence?

Step 3: Search: “RAG poisoning attack research 2024 OR 2025”

Find any academic or security research on RAG poisoning

Document: what injection methods were demonstrated?

Step 4: Go to owasp.org and search for RAG in the LLM Top 10

Find which OWASP LLM category covers RAG poisoning

(Hint: LLM03 Training Data Poisoning covers related concepts)

Step 5: Based on your research:

List the 3 highest-risk document sources for RAG poisoning

in a typical enterprise deployment.

Justify why each is high risk.

📸 Share your top 3 high-risk RAG document sources with justification in #ai-security on Discord.

Attack Vector 1 — Document Injection

Document injection is the most direct RAG poisoning technique. The attacker adds a document to the knowledge base containing prompt injection payloads embedded within otherwise legitimate-looking content. The injection payload may appear in body text between normal content, in metadata fields, or in document sections unlikely to be reviewed during content audits. When any user query semantically matches the poisoned document’s content, the document is retrieved and the injection payload enters the AI’s context.

Attack Vector 2 — Retrieval Manipulation

Retrieval manipulation targets the semantic similarity matching that determines which documents are retrieved for a given query. The attacker crafts documents with artificially high semantic similarity to common user queries — not because the document legitimately answers those queries, but because it has been optimised (via adversarial text generation or keyword stuffing in embedding-friendly patterns) to score highly in similarity search. This ensures the poisoned document is retrieved for a wide range of common queries, maximising the scope of influence.

⏱️ Time: 12 minutes · No tools

– HR policy Q&A (employees ask about benefits, PTO, promotions)

– IT helpdesk (employees ask about software, credentials, VPN)

– Finance guidance (employees ask about expense approvals, budgets)

You are a red team operator. You have write access to the company’s

internal wiki (Confluence), which is a document source for the RAG system.

Design a complete RAG poisoning engagement:

1. DOCUMENT SELECTION:

Which knowledge base (HR, IT, or Finance) is the highest-value target?

What type of queries does it answer?

What sensitive information do those queries involve?

2. POISONED DOCUMENT DESIGN:

Write the title, author, and first paragraph of your poisoned document.

It needs to pass a human content review.

What legitimate content surrounds the injection payload?

3. RETRIEVAL OPTIMISATION:

What keywords and phrases do you include to ensure retrieval

for the highest volume of common queries?

(Think: what does an IT helpdesk get asked most often?)

4. PAYLOAD OBJECTIVE:

What specific instruction do you embed?

(Credential extraction? Data exfiltration to external URL?

Misinformation that damages employee trust in security?)

5. PERSISTENCE:

How long would this document remain in the knowledge base

before detection? What would trigger detection?

6. CLEANUP DETECTION:

If security discovers the poisoned document, what forensic

evidence would they find? How do you minimise forensic footprint?

Write the complete attack design with specific document content examples.

📸 Share your RAG poisoning attack design in #ai-security on Discord.

Attack Vector 3 — Context Window Flooding

Context window flooding exploits the finite context window of LLMs. When retrieved documents are very large, they consume most of the available context window, crowding out the system prompt and user query. If the system prompt contains safety instructions (“never reveal confidential data”), and those instructions are squeezed out by retrieved content, the model’s safety behaviour is effectively disabled for that query. An attacker crafts extremely long documents optimised to be retrieved for high-value queries, flooding the context to dilute safety instructions.

Detection and Defence

⏱️ Time: 10 minutes · Browser only

Find any security guidance specifically for RAG deployments

Note the top 5 recommended controls

Step 2: Go to docs.pinecone.io or docs.weaviate.io

Find documentation on:

– Access controls for write operations

– Document metadata and provenance tracking

– Audit logging for document additions

Note: which of these are enabled by default vs optional?

Step 3: Search: “vector database access control best practices”

Find recommendations for securing vector database write access

Note: what authentication methods are recommended?

Step 4: Based on your research, build a RAG Security Checklist:

[ ] Document source access controls (who can add documents?)

[ ] Document content scanning before ingestion

[ ] Document provenance metadata (who added what, when?)

[ ] Vector database write API authentication

[ ] Regular knowledge base content audits

[ ] Output monitoring for anomalous AI responses

[ ] Context window size monitoring (detect flooding)

[ ] User report mechanism for unexpected AI behaviour

Step 5: For each item: what is the implementation complexity?

Low/Medium/High? What does it cost in engineering time?

📸 Share your completed RAG security checklist in #ai-security on Discord. Tag #ragpoisoning2026

🧠 QUICK CHECK — RAG Poisoning

📚 Further Reading

- Prompt Injection in RAG Systems 2026 — Deep dive into injection specifically targeting RAG retrieval pipelines — the extended technical guide to all injection vectors in retrieval-augmented systems.

- LLM Hacking Guide 2026 — The complete OWASP LLM Top 10 assessment methodology — RAG poisoning maps to LLM03 Training Data Poisoning and requires the full assessment approach covered in this guide.

- AI for Hackers Hub — Complete SecurityElites AI security series covering all attack vectors from jailbreaking through RAG poisoning and autonomous agent exploitation.

- PoisonedRAG — Academic Research Paper — The primary academic research paper on RAG poisoning attacks — systematic analysis of injection techniques, retrieval manipulation, and quantitative effectiveness measurements across multiple RAG deployments.

- Chroma Vector Database Documentation — Open-source vector database documentation — understanding the storage and retrieval architecture is essential for designing effective RAG security assessments and access control implementations.