What You’ll Learn

⏱️ 12 min read

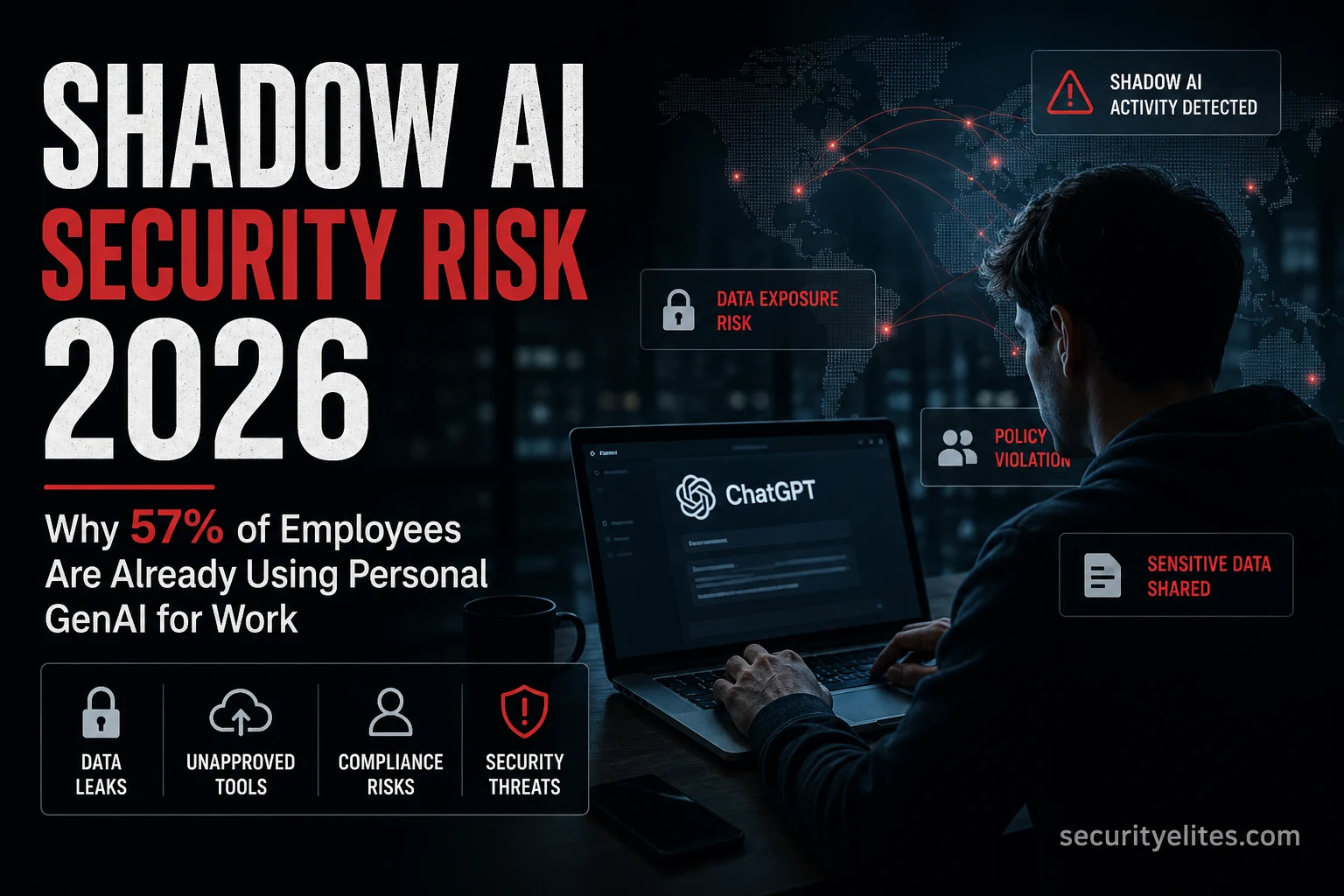

Shadow AI Security Risks 2026 — Complete Guide

Shadow AI is the employee-side manifestation of the data privacy risk I covered in “Is ChatGPT Safe for Work?”, and the Samsung incident described there is the canonical shadow AI case. For an approved AI governance framework that keeps shadow AI from becoming a liability, see the Google SAIF guide.

What Shadow AI Is

Shadow IT is the well-established practice of employees using technology tools that haven’t been approved by their organisation’s IT or security team. Shadow AI is the same concept applied specifically to AI tools — employees using ChatGPT, Gemini, Claude, Perplexity, Midjourney, or any other AI service for work tasks without organisational approval or visibility. My framing for clients who think this is a niche problem: if your organisation has more than 10 employees and hasn’t explicitly communicated an AI policy, you almost certainly have shadow AI usage happening right now.

The Security Risks It Creates

Shadow AI creates three distinct risk categories that I assess separately because they require different controls: data privacy risk, intellectual property risk, and compliance risk. The Samsung incident (three separate cases within 20 days of engineers pasting confidential material, including proprietary source code, into ChatGPT) is the clearest single illustration of all three converging.

How to Detect Shadow AI

My detection approach for shadow AI combines network monitoring, endpoint monitoring, and employee survey data. No single method gives a complete picture — organisations that rely solely on network monitoring will miss browser-based AI usage on personal devices, and organisations that only survey employees will miss the cases people don’t self-report.
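
To show why the sources complement each other, here is a minimal sketch that cross-references network evidence with anonymous survey answers. It assumes a simple whitespace-separated proxy log (timestamp, user ID, destination host) and a hand-maintained list of AI service domains; the log format, the domain list, and the function names are illustrative assumptions rather than any product's schema, and endpoint monitoring is not modelled. Tools that appear only in the survey suggest usage on personal devices or networks; tools that appear only in the logs suggest usage people aren't self-reporting.

```python
from collections import Counter

# Illustrative (assumed) mapping of AI service hostnames to tool names.
# A real deployment would maintain a longer, regularly updated list.
AI_DOMAINS = {
    "chatgpt.com": "ChatGPT",
    "chat.openai.com": "ChatGPT",
    "gemini.google.com": "Gemini",
    "claude.ai": "Claude",
    "www.perplexity.ai": "Perplexity",
    "www.midjourney.com": "Midjourney",
}


def tools_from_proxy_log(lines):
    """Count requests per AI tool.

    Assumes each log line is 'timestamp user_id destination_host',
    separated by whitespace (a hypothetical format).
    """
    counts = Counter()
    for line in lines:
        parts = line.split()
        if len(parts) < 3:
            continue  # skip malformed lines
        tool = AI_DOMAINS.get(parts[2].lower())
        if tool:
            counts[tool] += 1
    return counts


def cross_check(network_counts, survey_answers):
    """Compare tools seen on the network with tools self-reported.

    survey_answers: a list of sets, one per anonymous respondent.
    """
    seen = set(network_counts)
    reported = set().union(*survey_answers)
    return {
        "both": sorted(seen & reported),
        # Reported but never observed: likely personal devices or networks.
        "survey_only": sorted(reported - seen),
        # Observed but never reported: usage people are not self-reporting.
        "network_only": sorted(seen - reported),
    }


if __name__ == "__main__":
    log = [
        "2026-01-12T09:14 u102 chatgpt.com",
        "2026-01-12T09:20 u215 claude.ai",
        "2026-01-12T09:31 u102 intranet.example.com",
    ]
    survey = [{"ChatGPT"}, {"ChatGPT", "Perplexity"}, set()]
    print(cross_check(tools_from_proxy_log(log), survey))
```

Neither list alone is the answer; the value is in the two difference sets, which tell you where each method is blind.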

What Policies Actually Work

My honest assessment of AI policies, based on the dozens I have reviewed in client environments: long, restrictive policies that say “never use AI for work” are ignored, while short, specific policies with clear green/amber/red guidance are followed. The goal isn’t zero AI usage; it’s controlled AI usage, where the right tools are used for the right data. Trying to ban AI entirely in 2026 is like trying to ban the internet in 2005: it doesn’t work, and it creates a covert usage problem that’s harder to manage than an open one.
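
To make the green/amber/red idea concrete, here is a minimal sketch of a policy check that answers one question: may this tool be used with this data? The data categories, their tier assignments, and the tool names are hypothetical placeholders, and the choice that no tool is cleared for red data is an assumption for illustration; a real policy may approve specific tools for some red use cases.

```python
from enum import Enum


class Tier(Enum):
    GREEN = "green"  # e.g. public information
    AMBER = "amber"  # e.g. internal, non-sensitive material
    RED = "red"      # e.g. source code, customer data, legal documents


# Hypothetical data categories and the tier each falls into.
DATA_TIERS = {
    "public_marketing_copy": Tier.GREEN,
    "internal_process_docs": Tier.AMBER,
    "source_code": Tier.RED,
    "customer_data": Tier.RED,
    "financial_data": Tier.RED,
    "legal_documents": Tier.RED,
}

# Hypothetical approved-tool register: the tiers each tool is cleared for.
# In this sketch no tool is cleared for RED data.
APPROVED_TOOLS = {
    "consumer_chatbot": {Tier.GREEN},
    "enterprise_assistant": {Tier.GREEN, Tier.AMBER},
}


def is_allowed(tool: str, data_category: str) -> bool:
    """Return True if the policy permits `tool` for `data_category`.

    Fails closed: unknown data categories are treated as RED and
    unknown tools as unapproved.
    """
    tier = DATA_TIERS.get(data_category, Tier.RED)
    return tier in APPROVED_TOOLS.get(tool, set())


if __name__ == "__main__":
    print(is_allowed("enterprise_assistant", "internal_process_docs"))  # True
    print(is_allowed("consumer_chatbot", "source_code"))                 # False
```

Defaulting unknown categories to red and unknown tools to unapproved means gaps in the register produce a "no" rather than a silent "yes", which keeps the short policy safe while it is still incomplete.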

Step 1: List the roles in your organisation that handle sensitive data

(Think: who handles source code, customer data, financial data, legal docs, strategy?)

Step 2: For each role, identify the top 3 work tasks where AI could help

(This is what they’re searching for AI tools to do)

Step 3: For each task, classify the data they’d likely enter

Use the green/amber/red framework above

Step 4: Identify the gap

For each amber/red task: is there an approved tool that covers this use case?

If no: that gap is your shadow AI risk — employees will use personal tools

Step 5: Design the policy response

For each high-risk task with no approved tool:

Option A: provide an approved tool for that specific use case

Option B: document the task as prohibited with a specific explanation why

Option C: accept the risk (document the decision)

Output: a one-page shadow AI risk map for your organisation
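
The five steps can be captured as a small data model, which makes the gap analysis in Steps 4 and 5 mechanical. This is a sketch under assumptions: the `Task` fields and response codes mirror the exercise above, but the names, example roles, and tasks are hypothetical and should be replaced with your own.

```python
from dataclasses import dataclass
from typing import Optional

# Response codes for Step 5 (Options A, B and C in the exercise).
RESPONSES = {
    "A": "Provide an approved tool for this use case",
    "B": "Prohibit, with a specific explanation why",
    "C": "Accept the risk (decision documented)",
}


@dataclass
class Task:
    role: str                            # Step 1: a role that handles sensitive data
    description: str                     # Step 2: a task where AI could help
    tier: str                            # Step 3: "green", "amber" or "red"
    approved_tool: Optional[str] = None  # Step 4: approved tool covering it, if any


def risk_map(tasks, decisions):
    """Steps 4 and 5: return (role, task, tier, response) for every
    amber/red task with no approved tool.

    `decisions` maps (role, description) to a response code; tasks
    without a decision are marked UNDECIDED.
    """
    rows = []
    for task in tasks:
        if task.tier in ("amber", "red") and task.approved_tool is None:
            code = decisions.get((task.role, task.description))
            rows.append((task.role, task.description, task.tier,
                         RESPONSES.get(code, "UNDECIDED")))
    return rows


if __name__ == "__main__":
    tasks = [
        Task("Engineer", "Debug proprietary code", "red"),
        Task("Engineer", "Summarise public documentation", "green"),
        Task("Sales", "Draft customer emails", "amber",
             approved_tool="enterprise_assistant"),
        Task("Legal", "Summarise contracts", "red"),
    ]
    decisions = {("Engineer", "Debug proprietary code"): "A"}
    for row in risk_map(tasks, decisions):
        print(row)
```

Rows marked UNDECIDED are the open items to resolve before the one-page map is complete.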

Shadow AI — Start With the Survey

Send an anonymous survey to your team this week asking which AI tools they use for work. The results will reveal your actual shadow AI exposure more accurately than network monitoring alone, which misses usage on personal devices. The ChatGPT work safety guide gives employees the education they need to understand why the policy exists.

Further Reading

- Is ChatGPT Safe for Work? 2026 — The employee education piece. What ChatGPT and other consumer AI platforms actually do with submitted data, the Samsung incident in full, and the settings changes every employee should make.

- Google SAIF Framework — SAIF Principle 4 (harmonise platform-level controls) is the programme element that directly addresses shadow AI governance. The full SAIF scoring exercise applies here.

- ChatGPT vs Gemini vs Claude Security — Choosing the right approved AI tool for your organisation. The data policy comparison across all three platforms’ consumer and enterprise tiers.

- Gartner — Top Cybersecurity Trends 2026 — The primary source for the 57% and 33% shadow AI statistics, and the full Gartner context on why GenAI breaks traditional security awareness approaches.